Survey Solver

The Survey Solver node can be used to estimate camera motion using a set of feature tracks generated by a User Track node, each having a pre-specified 3D position.

The Survey Solver node can have multiple inputs and multiple outputs, and it can solve for more than one camera at the same time, provided the set of trackers has been tracked in each input clip.

When available, LIDAR data files can be imported and used to assist the survey solver. Individual trackers can be attached to LIDAR data points to set their survey coordinates.

Geometric objects can also be used to generate survey coordinates for each tracker. After a suitable geometric mesh has been imported, it can be positioned in front of the camera, and survey coordinates can be generated for trackers by intersecting the mesh.

The survey solve process can be influenced by the user in several ways, such as specifying an initial frame to start from or providing a hint about how the camera is moving. Error graphs are available to assess which features are not being solved accurately.

The Survey Solver node can also be used to generate a survey text file containing the survey coordinates for individual trackers.

Overview

The Survey Solver works by examining the motion paths of tracking points and trying to work out suitable camera parameters (such as focal length) and a motion transformation that can explain the paths. Because trackers have so much influence over the camera solver, it is very important to use a set of good quality tracker points.

The survey solver is able to function when four or more trackers are tracked between adjacent frames, although using more than four trackers will often increase the accuracy of the solution and reduce the amount of error caused by noise in the tracker paths. Each tracking point should be tracked over multiple frames, and tracking points that exist over a long period are often those that provide the most benefit to the solver.
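The four-tracker requirement can be checked before solving. The following is a minimal sketch, assuming tracker paths are held in a hypothetical dictionary that maps each tracker name to its 2D position in every frame where it was tracked (this is not a PFTrack data structure or API). It lists the adjacent frame pairs that share fewer than four trackers:

```python
from typing import Dict, List, Tuple

# Hypothetical representation: tracker name -> {frame number: (x, y) image position}
TrackerPaths = Dict[str, Dict[int, Tuple[float, float]]]

MIN_SHARED_TRACKERS = 4  # minimum number of trackers between adjacent frames


def weak_frame_pairs(paths: TrackerPaths, start: int, end: int) -> List[Tuple[int, int, int]]:
    """Return (frame, next_frame, shared_count) for each adjacent frame pair in
    [start, end] where fewer than MIN_SHARED_TRACKERS trackers appear in both."""
    weak = []
    for frame in range(start, end):
        shared = sum(
            1 for positions in paths.values()
            if frame in positions and frame + 1 in positions
        )
        if shared < MIN_SHARED_TRACKERS:
            weak.append((frame, frame + 1, shared))
    return weak


example = {
    "Tracker0001": {1: (10.0, 20.0), 2: (11.0, 20.5), 3: (12.0, 21.0)},
    "Tracker0002": {1: (50.0, 60.0), 2: (51.0, 60.2), 3: (52.0, 60.4)},
    "Tracker0003": {1: (90.0, 15.0), 2: (91.0, 15.1)},
    "Tracker0004": {1: (30.0, 80.0), 2: (30.5, 80.3), 3: (31.0, 80.6)},
}
print(weak_frame_pairs(example, 1, 3))  # [(2, 3, 3)]
```

Frame pairs reported this way are places where additional trackers are likely to be needed before the solve can succeed.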

Because survey positions must be measured on-set and are tied to specific image features, we recommend using a User Track node to generate the tracking points. X, Y and Z survey positions must be entered for each tracker. The coordinates for a single tracker can be copied to or pasted from the desktop clipboard by right-clicking in the tracker list and selecting the appropriate option from the popup menu.

In order to estimate the camera position accurately, tracking points must be placed on both foreground and background image features, so that the parallax motion between the trackers can be used to estimate the position of the camera.

The survey solver works by first estimating the camera position at the initial frame, and then extending the solution outwards, adding more frames until the entire camera path is complete.

Once solved, trackers are shown in the Cinema window, coloured according to how well their projected position matches their 2D tracker location. Trackers that match their 2D location well (with a projection error of less than 1 pixel) are coloured green, trackers with projection errors between 1 and 2 pixels are coloured orange, and trackers with projection errors larger than 2 pixels are coloured red. An error line is also drawn connecting the projected tracker position with its 2D tracker location.
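The colour coding amounts to a simple threshold on each tracker's projection error. A minimal sketch; how errors of exactly 1 or 2 pixels are classified is an assumption, since only the ranges are described:

```python
def error_colour(projection_error_px: float) -> str:
    """Return the display colour for a tracker's projection error in pixels.

    Boundary handling at exactly 1.0 and 2.0 pixels is an assumption.
    """
    if projection_error_px < 1.0:
        return "green"
    if projection_error_px < 2.0:
        return "orange"
    return "red"


for err in (0.4, 1.5, 3.2):
    print(err, error_colour(err))  # green, orange, red respectively
```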

Entering survey coordinates

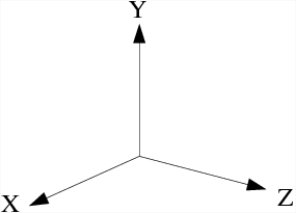

Internally, PFTrack uses a left-handed coordinate system with Y as the up vector.

Survey coordinates can be entered manually by double-clicking in the Survey X, Survey Y or Survey Z columns in the Tracker list.

Multiple survey coordinates can be imported from an ASCII file using the file format specified below:

# Name SurveyX SurveyY SurveyZ Uncertainty
"Tracker0001" 3.395012 -0.197068 -3.248246 0.010000
"Tracker0002" 15.167476 1.596467 6.355403 0.010000
"Tracker0003" 11.733214 5.690820 32.882875 0.010000
"Tracker0004" 12.141885 1.718063 33.046929 0.010000
"Tracker0005" 3.581708 0.131686 27.110810 0.010000
"Tracker0006" -1.715234 -0.079394 2.075065 0.010000

Depending on their source, the survey coordinates you have may not match this coordinate system. In this case, a new coordinate system can be specified using the Coordinate system menu beneath the tracker list before importing. For example, setting this to Right/Z will allow survey coordinates to be entered from a right-handed z-up source and converted automatically into PFTrack's internal coordinate system.
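Importing a survey file amounts to parsing each line and, where needed, converting the coordinates into the internal left-handed, Y-up system. The sketch below assumes tracker names are double-quoted, every data line carries all five fields, and lines beginning with # are comments. The axis mapping in `to_left_y` is one plausible choice for illustration only: the documentation states each option's handedness and up direction, but not which source axis maps to which internal axis. The only fixed rule is that converting between opposite handedness needs an odd number of axis swaps or negations. `parse_survey_text` and `to_left_y` are hypothetical helper names, not PFTrack functions.

```python
import shlex
from typing import Dict, NamedTuple, Tuple

Vec3 = Tuple[float, float, float]


class SurveyPoint(NamedTuple):
    position: Vec3
    uncertainty: float


def parse_survey_text(text: str) -> Dict[str, SurveyPoint]:
    """Parse lines of the form: "Name" X Y Z Uncertainty ('#' lines are comments)."""
    points: Dict[str, SurveyPoint] = {}
    for line_no, line in enumerate(text.splitlines(), start=1):
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        fields = shlex.split(stripped)  # removes the quotes around the tracker name
        if len(fields) != 5:
            raise ValueError(f"line {line_no}: expected 5 fields, got {len(fields)}")
        x, y, z, u = (float(v) for v in fields[1:])
        points[fields[0]] = SurveyPoint((x, y, z), u)
    return points


def to_left_y(p: Vec3, system: str) -> Vec3:
    """Convert a point from `system` into a left-handed, Y-up frame.

    Assumed conventions (illustrative only): X is kept and the source "up"
    axis becomes Y. Swapping two axes or negating one flips handedness.
    """
    x, y, z = p
    if system == "Left/Y":
        return (x, y, z)
    if system == "Right/Y":
        return (x, y, -z)   # one negation flips handedness
    if system == "Right/Z":
        return (x, z, y)    # one swap: Z-up becomes Y-up and handedness flips
    if system == "Left/Z":
        return (x, z, -y)   # swap plus negation keeps handedness
    raise ValueError(f"unknown coordinate system: {system}")


sample = '''# Name SurveyX SurveyY SurveyZ Uncertainty
"Tracker0001" 3.395012 -0.197068 -3.248246 0.010000
"Tracker0002" 15.167476 1.596467 6.355403 0.010000
'''
for name, point in parse_survey_text(sample).items():
    # Treat the sample as if it came from a right-handed, Z-up source
    print(name, to_left_y(point.position, "Right/Z"), point.uncertainty)
```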

Generating Survey Coordinates

When a solved camera path viewing the same scene has been attached as an additional input to the Survey Solver node, the camera positions can be used to generate survey coordinates for trackers to help solve the primary camera in the same world-space.

For example, the Camera Solver node can be used to track one camera which is then attached as the second input to the Survey Solver node. Each tracker in the primary camera can then be positioned in two or more frames of the second input and triangulated to generate a survey coordinate. When the primary camera is then solved, its motion will be in the same world-space as the secondary camera.

Alternatively, the solved camera in the second input could be generated using a Photo Survey node and many reference photos of the set. In this case, the Photo Survey node would estimate the position of each reference frame and construct a 3D point cloud for the set. These camera positions can then be used in the Survey Solver node to generate survey coordinates for trackers in the same world space, and the moving camera can be tracked.

In order to generate survey coordinates for a tracker, one or more existing cameras must be marked as Already solved. Selected trackers must be located in two or more frames of the solved clip to triangulate their 3D position and update the survey coordinates for the tracker accordingly. The Set Position button allows a tracker to be manually placed in multiple frames of a secondary solved camera to generate survey coordinates in the same world-space. To remove a tracker from a frame in the secondary camera, hold the Shift key whilst clicking with the left mouse button.
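Triangulating a tracker from two or more frames of a solved camera means finding the 3D point closest to the rays through the tracker in each frame. The sketch below uses the standard least-squares ray intersection (minimising the summed squared distance from the point to each ray); PFTrack's actual triangulation method is not documented and may differ. Each ray is assumed to start at the solved camera centre for a frame and point through the tracker's position in that frame, which requires the solved camera pose and focal length. `triangulate_rays` is a hypothetical helper.

```python
import math
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def _normalise(v: Vec3) -> Vec3:
    n = math.sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2])
    return (v[0] / n, v[1] / n, v[2] / n)


def _det3(m: Sequence[Sequence[float]]) -> float:
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def triangulate_rays(origins: List[Vec3], directions: List[Vec3]) -> Vec3:
    """Return the point minimising the summed squared distance to all rays.

    Solves sum(I - d d^T) p = sum(I - d d^T) o for unit directions d.
    Needs at least two rays that are not all parallel.
    """
    if len(origins) < 2 or len(origins) != len(directions):
        raise ValueError("need two or more rays, one direction per origin")
    a = [[0.0] * 3 for _ in range(3)]
    b = [0.0, 0.0, 0.0]
    for o, d in zip(origins, directions):
        d = _normalise(d)
        for i in range(3):
            for j in range(3):
                # (I - d d^T) projects onto the plane perpendicular to the ray
                m_ij = (1.0 if i == j else 0.0) - d[i] * d[j]
                a[i][j] += m_ij
                b[i] += m_ij * o[j]
    det = _det3(a)
    if abs(det) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    result = []
    for col in range(3):
        # Cramer's rule: replace one column of A with b
        m = [row[:] for row in a]
        for row in range(3):
            m[row][col] = b[row]
        result.append(_det3(m) / det)
    return (result[0], result[1], result[2])


# Two camera centres, each with a ray through the same scene point
print(triangulate_rays([(0.0, 0.0, 0.0), (4.0, 0.0, 0.0)],
                       [(1.0, 2.0, 5.0), (-3.0, 2.0, 5.0)]))  # approximately (1, 2, 5)
```

With noisy tracker positions the rays will not meet exactly, which is why a least-squares solution is used rather than a direct intersection.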

LIDAR data

LIDAR data generated by a scanning system can be imported into the Survey Solver node and used to assign survey coordinates to tracking points.

LIDAR data can be imported from either ASCII .XYZ or .PTS data files containing the X, Y and Z coordinates of each point along with an optional RGB colour (specified using three 8-bit values), or using the binary FARO .XYB file format.

An example ASCII XYZ data file containing XYZ coordinates only:

# Comments are ignored
# X Y Z
0.66430000 6.59480000 18.94980000
0.76650000 6.57850000 18.94030000
1.10040000 6.54590000 18.99880000
0.56390000 6.60170000 18.90050000
0.58000000 6.59490000 18.88580000
0.61420000 6.59770000 18.90430000

An example ASCII XYZ data file containing XYZ coordinates and RGB colours:

# Comments are ignored
# X Y Z R G B
0.66430000 6.59480000 18.94980000 123 125 130
0.76650000 6.57850000 18.94030000 116 115 128
1.10040000 6.54590000 18.99880000 120 127 136
0.56390000 6.60170000 18.90050000 99 98 105
0.58000000 6.59490000 18.88580000 69 68 77
0.61420000 6.59770000 18.90430000 94 93 103
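Both ASCII layouts shown above can be read by a single parser that accepts three or six values per line. A minimal sketch; `parse_xyz` is a hypothetical helper, and PTS files written by some scanners may also contain a leading point-count line or an extra intensity column, which this sketch does not handle.

```python
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]
Colour = Optional[Tuple[int, int, int]]


def parse_xyz(text: str) -> List[Tuple[Point, Colour]]:
    """Parse ASCII XYZ lines with an optional 8-bit RGB colour.

    Lines starting with '#' are treated as comments and skipped.
    """
    points: List[Tuple[Point, Colour]] = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        stripped = line.strip()
        if not stripped or stripped.startswith("#"):
            continue
        fields = stripped.split()
        if len(fields) not in (3, 6):
            raise ValueError(f"line {line_no}: expected 3 or 6 values, got {len(fields)}")
        xyz = (float(fields[0]), float(fields[1]), float(fields[2]))
        rgb: Colour = None
        if len(fields) == 6:
            r, g, b = (int(v) for v in fields[3:])
            if not all(0 <= c <= 255 for c in (r, g, b)):
                raise ValueError(f"line {line_no}: colour values must be 0-255")
            rgb = (r, g, b)
        points.append((xyz, rgb))
    return points


data = """# X Y Z R G B
0.66430000 6.59480000 18.94980000 123 125 130
0.76650000 6.57850000 18.94030000 116 115 128
"""
for xyz, rgb in parse_xyz(data):
    print(xyz, rgb)
```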

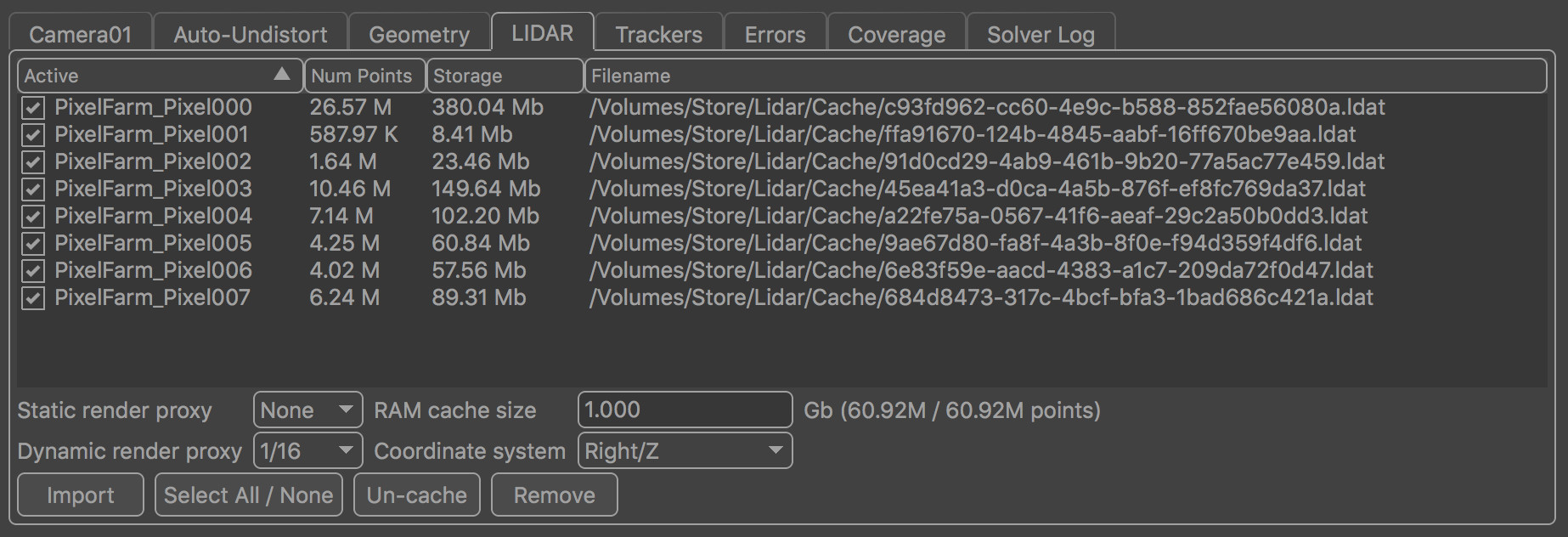

Importing LIDAR data

To import a LIDAR data file, the coordinate system for that data file must first be specified using the Coordinate system menu options of Left/Y, Left/Z, Right/Y or Right/Z which define a left or right-handed coordinate system and the Y or Z up direction. When importing more than one LIDAR data file, each file will be stored separately and can be enabled or disabled if required.

When LIDAR data is imported, it is first converted to an efficient binary data format. This is done to increase the speed at which the LIDAR data can be read from disk when required. Note that the conversion process can generate files that are many gigabytes in size (for example, importing 360 million data points will generate a file around 5 Gb in size).
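The binary layout is not documented, so the arithmetic below is only a plausibility check on the quoted figure. Assuming a hypothetical 15 bytes per point (three 4-byte floats for position plus three 1-byte colour channels), 360 million points come to roughly 5 GiB:

```python
BYTES_PER_POINT = 15  # hypothetical layout: 3 x 4-byte float position + 3 x 1-byte RGB


def binary_size_gib(num_points: int, bytes_per_point: int = BYTES_PER_POINT) -> float:
    """Approximate size of a converted LIDAR file in GiB (2**30 bytes)."""
    return num_points * bytes_per_point / 2**30


print(f"{binary_size_gib(360_000_000):.2f} GiB")  # 5.03 GiB
```

The same estimate can be used to check that the chosen storage location has enough free space before importing a large scan.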

The binary file generated by the conversion process can be shared between multiple Survey Solver nodes within the same project if required, so importing the same data file into additional nodes will not generate multiple copies of the binary file unless the location of the original data file changes.

By default, the binary file will be stored in the project's scratch folder. This location can be changed in the Preferences. The default location is listed as project:/scratch, and when binary files are stored in this location, they can only be used by Survey Solver nodes within the current project.

Once the binary file has been generated, information about the number of LIDAR points available and the amount of disk space used will be displayed in the LIDAR table. The filename of each binary data file is also presented, and each data set can be enabled or disabled as required by ticking or un-ticking the box in the first column of the table.

Sharing LIDAR data between projects

If the storage location is changed to a location outside of the project folder before LIDAR data is imported, the binary files can be shared between projects. In this situation, they can be linked to a Survey Solver node by clicking the Import button, and then selecting the desired LIDAR data filename from the file browser. PFTrack will then search the external storage location to find the corresponding binary data file and link it to the project.

Note that when storing the binary data files outside of the project folder, PFTrack will never attempt to remove them from disk, and management of these data files (for example, removing them when they are no longer needed) is left up to the user.

Displaying LIDAR data

The Survey Solver will store LIDAR data points in RAM so they can be displayed in the UI. The amount of RAM that will be used to cache data points is specified using the RAM cache size edit box, measured in gigabytes (Gb). Changing this value will cause the LIDAR data points to be read from disk again and stored in RAM.

Note that it is not necessary to have enough RAM available to store the entire LIDAR data set: if you have limited memory available and a very large data set then only a fraction of the points will be stored in RAM to help you navigate around the scene.

When displaying the LIDAR data set, a reduced number of points can be rendered by changing the Static render proxy and Dynamic render proxy menus. These menus specify a proxy resolution (as a fraction of the number of LIDAR points stored in RAM) used to display a static view of the scene or a dynamic view (i.e. when navigating the camera around the scene).
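To get a feel for how the RAM cache size and the two proxy menus interact, the sketch below estimates how many points fit in the cache and how many of those are drawn. It assumes a hypothetical 15 bytes per cached point (the real in-memory layout is not documented) and treats one gigabyte as 2**30 bytes. `display_budget` is a hypothetical helper.

```python
from dataclasses import dataclass

BYTES_PER_POINT = 15  # hypothetical: 3 x 4-byte float position + 3 x 1-byte RGB


@dataclass
class LidarDisplayBudget:
    cached_points: int   # points that fit in the RAM cache
    static_points: int   # points drawn from a static viewpoint
    dynamic_points: int  # points drawn while navigating the view


def display_budget(total_points: int, ram_cache_gb: float,
                   static_proxy: float, dynamic_proxy: float) -> LidarDisplayBudget:
    """Estimate cached and displayed point counts for a LIDAR data set."""
    for fraction in (static_proxy, dynamic_proxy):
        if not 0.0 < fraction <= 1.0:
            raise ValueError("proxy fractions must lie in (0, 1]")
    capacity = int(ram_cache_gb * 2**30) // BYTES_PER_POINT
    cached = min(total_points, capacity)  # a large data set may only partly fit
    # Proxy fractions apply to the cached points, not to the whole data set
    return LidarDisplayBudget(cached, int(cached * static_proxy), int(cached * dynamic_proxy))


print(display_budget(360_000_000, 2.0, 0.5, 0.1))
```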

Generating survey coordinates from LIDAR

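Once LIDAR data has been processed and stored in RAM, it can be used to generate survey coordinates for tracking points. To do this, open a Viewer window, select a tracker from the Tracker list, click the Attach button, and then click on the corresponding LIDAR point in the Viewer window with the left mouse button.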

When a tracker is attached, the LIDAR data will be searched to find the nearest point, and X, Y and Z survey coordinates will be generated for the selected tracker. Once survey coordinates have been found, a yellow box will be drawn around the coordinate point showing the search region that was used. If an incorrect point has been identified, the search area may be too large; try reducing the Search Size value.
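The nearest-point search can be sketched as a scan restricted to a search region. This assumes the region is an axis-aligned cube whose side equals the Search Size and which is centred on the clicked 3D location; PFTrack's actual search region and data structures are not documented, and a practical implementation over millions of points would use a spatial index rather than a linear scan. `nearest_in_search_box` is a hypothetical helper.

```python
import math
from typing import Iterable, Optional, Tuple

Vec3 = Tuple[float, float, float]


def nearest_in_search_box(query: Vec3, points: Iterable[Vec3],
                          search_size: float) -> Optional[Vec3]:
    """Return the point nearest to `query` among those inside a cube of side
    `search_size` centred on `query`, or None if the cube contains no points."""
    half = search_size / 2.0
    best: Optional[Vec3] = None
    best_dist = math.inf
    for p in points:
        # Cheap box test first, exact distance only for candidates inside it
        if all(abs(p[i] - query[i]) <= half for i in range(3)):
            d = math.dist(p, query)
            if d < best_dist:
                best, best_dist = p, d
    return best


cloud = [(0.0, 0.0, 0.0), (0.4, 0.0, 0.0), (1.0, 1.0, 1.0)]
print(nearest_in_search_box((0.5, 0.0, 0.0), cloud, 0.5))   # (0.4, 0.0, 0.0)
print(nearest_in_search_box((0.5, 0.0, 0.0), cloud, 0.05))  # None
```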

If the Attach to RAM cached points only button is enabled, only those points cached in RAM will be searched; otherwise the entire LIDAR data set stored on disk will be searched (this will take significantly more time than searching only the points stored in RAM). Note that the render proxy settings do not affect the number of points being searched in RAM.

Once survey coordinates have been generated for multiple trackers, the camera can be solved in the usual way as described in the rest of this document.

Geometry

Survey coordinates can be generated using a geometric object if required. To do this, a geometric object that matches part of the scene should first be created externally and then imported into PFTrack. Currently, Autodesk FBX, Alembic, and Alias Wavefront OBJ formats are supported.

Once imported, the geometric object must be aligned with the camera in one frame. A ray passing through each tracker in that frame can then be intersected with the geometric object to generate survey coordinates. Note that in order to align the object accurately with the camera image, the camera focal length must be known and entered beforehand. If the focal length is not known, the survey coordinates for each tracker will only approximate the true positions.

Geometry is stored in a left-handed coordinate system using Y as the up direction. If the imported model uses a different convention, its original coordinate system and up direction can be specified in the Geometry controls, allowing the model to be converted automatically into the left-handed, Y-up system.

By default, the origin point (0, 0, 0) in the mesh's coordinate system will be used as the object's pivot point. This can be changed to the centre of the object's bounding box by clicking the Centre Pivot button.

Changing the render style

When positioning the camera, it is often useful to change the way the mesh is displayed on screen so it is easier to see how well the mesh matches the underlying image data. The Render style option can be used to adjust how the mesh is displayed.

Positioning the object/camera

After geometry has been loaded, it must be positioned manually in one frame before survey coordinates can be generated.

The Transform mode option is used to specify an interaction mode for manually transforming the object/camera in either the Cinema or a Viewer window.

Generating survey coordinates from geometry

Once the camera has been positioned in one frame so the geometric object is correctly aligned with the image data viewed by the camera, survey coordinates can be generated for one or more selected tracking points.

For each selected tracker, a ray is cast from the camera through the tracker position, and the point where this ray intersects the geometric mesh is used as the survey coordinate for that tracker. Initially, the uncertainty value for each tracker will be set to zero, although this value should be modified according to the accuracy of the 3D model and how well the camera has been positioned to view it.
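Casting a ray through a tracker and intersecting it with a triangle mesh is commonly done with the Möller-Trumbore ray/triangle test. The sketch below uses that technique and keeps the nearest hit; it illustrates the idea rather than PFTrack's documented method. It assumes the mesh is a plain list of triangles in the same world space as the camera, and that the ray direction through the tracker has already been computed from the camera pose and focal length. `ray_triangle` and `survey_from_mesh` are hypothetical names.

```python
from typing import List, Optional, Tuple

Vec3 = Tuple[float, float, float]
Triangle = Tuple[Vec3, Vec3, Vec3]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def ray_triangle(origin: Vec3, direction: Vec3, tri: Triangle,
                 eps: float = 1e-9) -> Optional[float]:
    """Möller-Trumbore test: return the ray parameter t of the hit, or None."""
    v0, v1, v2 = tri
    e1, e2 = _sub(v1, v0), _sub(v2, v0)
    p = _cross(direction, e2)
    det = _dot(e1, p)
    if abs(det) < eps:  # ray is parallel to the triangle's plane
        return None
    inv_det = 1.0 / det
    s = _sub(origin, v0)
    u = _dot(s, p) * inv_det  # first barycentric coordinate
    if u < 0.0 or u > 1.0:
        return None
    q = _cross(s, e1)
    v = _dot(direction, q) * inv_det  # second barycentric coordinate
    if v < 0.0 or u + v > 1.0:
        return None
    t = _dot(e2, q) * inv_det
    return t if t > eps else None  # ignore hits behind the camera


def survey_from_mesh(camera_centre: Vec3, ray_dir: Vec3,
                     mesh: List[Triangle]) -> Optional[Vec3]:
    """Return the nearest intersection of the tracker ray with the mesh."""
    best_t: Optional[float] = None
    for tri in mesh:
        t = ray_triangle(camera_centre, ray_dir, tri)
        if t is not None and (best_t is None or t < best_t):
            best_t = t
    if best_t is None:
        return None
    return (camera_centre[0] + best_t * ray_dir[0],
            camera_centre[1] + best_t * ray_dir[1],
            camera_centre[2] + best_t * ray_dir[2])


near = ((-1.0, -1.0, 5.0), (1.0, -1.0, 5.0), (0.0, 1.0, 5.0))
far = ((-1.0, -1.0, 10.0), (1.0, -1.0, 10.0), (0.0, 1.0, 10.0))
print(survey_from_mesh((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), [far, near]))  # (0.0, 0.0, 5.0)
```

Keeping only the nearest hit matters because a ray can pass through several surfaces of the mesh, and the tracker lies on the first surface visible from the camera.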

Influencing The Solve

There are several steps that can be taken to influence the camera solve and refinement process, including:

Changing the initial frame used to start the solver.

Entering a known camera focal length, or range of focal lengths, if available. Note that entering a focal length measured in a physical unit such as millimetres requires the camera sensor/film back size to be set correctly. Entering a known focal length will make it easier to solve for camera motion, and the solver will also run faster.

Disabling trackers that are not tracked well. This can also help when refining a camera solve, once a decent solution has been obtained and poorly solved trackers have been identified using the error graph.

Creating a hint for the camera motion. This can be done by placing an Edit Camera node up-stream from the Survey Solver node, and keyframing an approximate camera path (including camera translation and rotation). The path does not need to be keyframed at every frame, but should match the overall camera motion fairly well. It is often sufficient to keyframe only every 10 or 20 frames or so, assuming the camera is not moving too much in between. A Test Object node can also be created to place objects that help when animating the camera hint.
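A sparse camera hint has to be filled in between keyframes. A minimal sketch using linear interpolation of keyframed camera positions; the interpolation actually used by the Edit Camera node is not stated here and may differ (for example, a spline), and rotation keys would need their own interpolation, such as quaternion slerp, which this sketch omits. `interpolate_hint` is a hypothetical helper.

```python
from bisect import bisect_right
from typing import Dict, Tuple

Vec3 = Tuple[float, float, float]


def interpolate_hint(keys: Dict[int, Vec3], frame: int) -> Vec3:
    """Linearly interpolate keyframed camera positions at `frame`.

    Frames outside the keyed range hold the first or last key.
    """
    if not keys:
        raise ValueError("at least one keyframe is required")
    frames = sorted(keys)
    if frame <= frames[0]:
        return keys[frames[0]]
    if frame >= frames[-1]:
        return keys[frames[-1]]
    i = bisect_right(frames, frame)
    f0, f1 = frames[i - 1], frames[i]
    w = (frame - f0) / (f1 - f0)  # 0 at f0, 1 at f1
    p0, p1 = keys[f0], keys[f1]
    return (p0[0] + w * (p1[0] - p0[0]),
            p0[1] + w * (p1[1] - p0[1]),
            p0[2] + w * (p1[2] - p0[2]))


# Keys every 20 frames, as suggested above
hint = {1: (0.0, 0.0, 0.0), 21: (10.0, 0.0, 2.0), 41: (12.0, 1.0, 6.0)}
print(interpolate_hint(hint, 11))  # (5.0, 0.0, 1.0)
```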

Solver controls

Current clip: The clip that is being displayed in the Cinema window. The Camera control tab will display information for the camera associated with the current clip.

Current group: The tracker group that will be used to solve for camera motion.

Start/end frames: The start and end frames to use for the camera solve. The S button will store the current frame number as either the start or end frame.

Initial frame: The frame at which to start the camera solve. The S button will store the current frame number.

Set initial frame automatically: When enabled, the initial frame will be set automatically by searching for a frame that contains enough trackers to estimate an initial camera position.

Solve un-surveyed trackers: When enabled, trackers that do not have survey coordinates will still be solved, provided enough surveyed trackers are available to solve for camera motion in the usual way.

Preview: When enabled, a preview of the solution at the initial camera frame will be generated. This can be used when adjusting the initial frame to determine which values perform best.

Solve All: Solve for camera motion. The camera solve can be run in the background by holding the Shift key whilst clicking on the Solve All button.

Refine All: Adjust camera motion to better match the tracker paths and survey locations. Refinement can be run multiple times, and a longer refinement can be run by holding the Shift key whilst clicking on the button.
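The Set initial frame automatically option searches for a frame that contains enough trackers. The exact criterion is not documented; the sketch below assumes a simple rule: pick the frame in the solve range where the most surveyed trackers are visible, requiring at least four. The tracker-path dictionary and `pick_initial_frame` are hypothetical.

```python
from typing import Dict, Iterable, Optional, Tuple

# Hypothetical representation: tracker name -> {frame: (x, y)}
TrackerPaths = Dict[str, Dict[int, Tuple[float, float]]]


def pick_initial_frame(paths: TrackerPaths, surveyed: Iterable[str],
                       start: int, end: int, minimum: int = 4) -> Optional[int]:
    """Return the frame in [start, end] where the most surveyed trackers are
    visible (the earliest frame wins ties), or None if no frame reaches `minimum`."""
    names = set(surveyed)
    best_frame: Optional[int] = None
    best_count = minimum - 1
    for frame in range(start, end + 1):
        count = sum(1 for name in names if frame in paths.get(name, {}))
        if count > best_count:
            best_frame, best_count = frame, count
    return best_frame
```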

Display controls

Show ground: When enabled, the ground plane will be displayed.

Show horizon: When enabled, the horizon line will be displayed.

Show geometry: When enabled, the geometric objects will be displayed.

Show survey: When enabled, only trackers with survey coordinates will be displayed.

Show names: When enabled, selected tracker names will be displayed.

Show info: When enabled, selected trackers will have position and residual error information displayed.

Show LIDAR: When enabled, LIDAR data will be displayed in the Cinema window.

Show LIDAR 3D: When enabled, LIDAR data will be displayed in the Viewer windows.

Show bound: When enabled, LIDAR data bounding box will be displayed in the Viewer windows.

Show frustum: When enabled, the camera frustum will be displayed in the Viewer windows.

Centre View: When enabled, the Cinema window will be panned so the projection of the first selected tracker is fixed at the centre of the window.

Marquee: Allow a tracker selection marquee to be drawn in the Cinema window or in a Viewer window. Holding the Control key whilst drawing will ensure that previous selections are kept. Holding the Shift key will allow a lasso selection to be used instead of a rectangle.

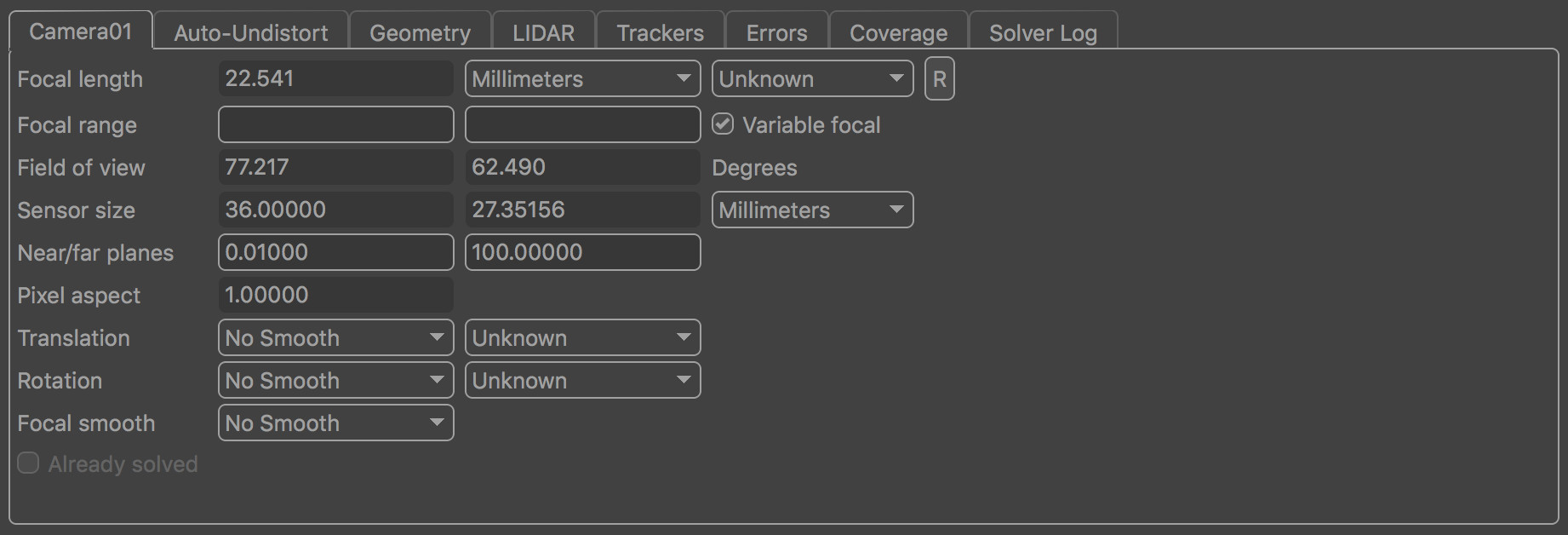

Camera controls

The camera control tab contains information about the camera associated with the current clip. If more than one input clip is present, the current camera (and clip) can be changed using the Current clip menu option.

Focal length: The camera focal length at the current frame. Focal length can be set as Known, Unknown or Initialised. If the focal length of the camera is known beforehand, entering the value here can often improve both the speed and accuracy of the camera solver. Setting focal length to Initialised will allow a minimum and maximum value to be specified in the Focal range edit boxes. For cameras with a constant focal length, setting focal length to Initialised will also allow an initial value to be entered into the focal length edit box. Note that entering a focal length measured in any unit other than Pixels requires the camera film back width and height to be set correctly.

Focal range: When Focal length is set to Initialised, these edit boxes define the minimum and maximum allowable values of focal length.

Variable focal: When enabled, the camera focal length will be allowed to vary throughout the clip. If focal length is set to Initialised, the minimum and maximum values over which focal length can vary may be entered into the Focal range edit boxes.

Field of view: The horizontal and vertical field of view at the current frame, measured in degrees.

Sensor size: The horizontal and vertical sensor/film back size. To change sensor size, edit the camera preset values in the Clip Input node.

Near/far planes: The near and far clipping planes for the camera.

Pixel aspect: The current pixel aspect ratio. To change the pixel aspect ratio, edit the camera preset value in the Clip Input node.
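The relationship between focal length, sensor size and field of view follows the standard pinhole camera model. The sketch below assumes square pixels and a sensor whose full width maps to the full image width (no cropping); PFTrack's own handling of pixel aspect and film back may include details not shown here. The function names are hypothetical.

```python
import math


def focal_mm_to_pixels(focal_mm: float, sensor_width_mm: float, image_width_px: int) -> float:
    """Convert a physical focal length to pixels using the sensor width."""
    return focal_mm * image_width_px / sensor_width_mm


def field_of_view_deg(focal_mm: float, sensor_size_mm: float) -> float:
    """Field of view across one sensor dimension: 2 * atan(size / (2 * f))."""
    return math.degrees(2.0 * math.atan(sensor_size_mm / (2.0 * focal_mm)))


# A 35 mm lens on a 36 mm wide sensor, rendered at 1920 pixels wide
print(f"{focal_mm_to_pixels(35.0, 36.0, 1920):.1f} px")     # 1866.7 px
print(f"{field_of_view_deg(35.0, 36.0):.1f} degrees")       # 54.4 degrees
```

This is why an incorrect film back size makes a focal length entered in millimetres misleading: the same millimetre value maps to a different pixel focal length and field of view.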

Motion hints and constraints

The Translation and Rotation menus can be used to specify various hints and constraints for how the camera is moving. The first menu is used to control the amount of smoothing that is applied to either the translation or rotation components of motion. Smoothing options are None, Low, Medium and High.

The second menu can be used to specify either a constraint on the motion (for example, 'No Translation'), or indicate that a hint should be used. Translation constraints are as follows:

No Translation: the camera is not translating at all, and there is no parallax in the shot. Note that this option is rarely used, since it refers to the position of the camera's optical centre. Even when mounted on a tripod, the camera will still be translating slightly, since the centre of rotation (the tripod mount point) will not correspond exactly to the camera's optical centre.

Unknown: A freely moving camera

Off-Centre: A camera that is mounted on a tripod, rotating around a point slightly offset from the true optical centre

Small: A camera that translates a small distance compared to the distance from the camera to the tracking points

Linear: Restrict camera motion to a straight line

Planar: Restrict camera motion to a flat plane

Metadata hint: When available, use metadata in the source media to provide a hint to camera translation

Rotation constraints are similar, but are limited to No Rotation, Unknown and Metadata hint.

Focal smooth: Specify how smooth the camera focal length changes are for cameras with a variable focal length. Options are None, Low, Medium and High.

Already Solved: When enabled, the camera will be marked as already solved and will be available as a source for generating survey coordinates using the Set Position button.

Auto-Undistort controls

When the camera is set to use automatic lens distortion correction in the Clip Input node, these controls can be used to indicate roughly how much distortion is present in the clip.

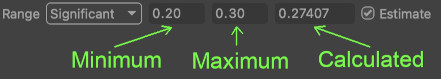

Range: This menu can be used to define the distortion range: Minimal, Moderate, Significant or Custom, where Minimal corresponds to a very small amount of distortion, and Significant corresponds to a wide-angle lens.

Lower and upper bounds on the distortion coefficient are provided on the right, which can be edited manually when in Custom mode.

Estimate: Enabling this option means the camera solver will attempt to estimate a suitable lens distortion coefficient during the camera solve.

After solving the camera, the image in the Cinema window will automatically adjust in size to represent the full undistorted image. If desired, the size of the image can be fixed to match the original image by enabling the Crop to input image size option.

After solving, the actual distortion value calculated for the current frame is displayed to the right.

Handy Tip: if you aren't sure what distortion range to use, try guessing first, solving your camera, and then looking at the calculated distortion value. If it is at the maximum of your range, this means PFTrack tried to increase it further but couldn't.

In this case, adjust the Range control upwards by one setting to increase the maximum allowable value, solve again, and see if that gives better results.

For example, if you set the Range to Moderate (0.1 to 0.2) and then solve your shot and the final distortion estimate is 0.2, try changing the Range to Significant and solving again to see if this gives a better result. You can always undo afterwards if the result isn't any better.
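The tip above amounts to checking whether the solved coefficient has reached the top of the allowed range. A minimal sketch; the tolerance value is an assumption, and the example uses the Moderate bounds (0.1 to 0.2) quoted above. `at_upper_bound` is a hypothetical helper.

```python
def at_upper_bound(estimate: float, lower: float, upper: float, tol: float = 1e-3) -> bool:
    """Return True when a solved distortion coefficient sits at the top of its
    allowed range, suggesting the Range control should be raised one setting."""
    if lower > upper:
        raise ValueError("lower bound must not exceed upper bound")
    return estimate >= upper - tol


print(at_upper_bound(0.2, 0.1, 0.2))   # True: try the next Range setting
print(at_upper_bound(0.15, 0.1, 0.2))  # False: the estimate lies inside the range
```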

Geometry controls

Coordinate system: The geometry's original coordinate system. Used to convert the geometry into PFTrack's internal coordinate system, if necessary.

Render style: The style in which the mesh is rendered. Options are:

Wire-frame: Display the mesh using wire-frame outlines only.

Hidden-line: Display the mesh as a wire-frame with hidden lines removed.

Facet-shaded: Display the mesh using flat shaded facets for each triangle.

Smooth-shaded: Display the mesh using smoothly interpolated vertex normals.

Transparent: Display the mesh using a semi-transparent colour. The transparency can be adjusted by clicking the Mesh Colour button and changing the Alpha value.

Checkerboard: Display the mesh using a checkerboard texture. Currently, this is only possible if the imported mesh already has a UV map associated with it and it is imported using the Wavefront OBJ file format. The scale of the checkerboard texture can be adjusted by clicking the Checker +/- buttons.

Textured: Display the mesh in a Viewer window using projective texturing from the camera. In the Cinema window, hidden-line rendering will be used instead.

Transform mode: The interaction mode for manually transforming the object or camera. Options are:

None: This mode disables all manual interaction with the object or camera.

Fly: When this mode is enabled, the camera can be translated or rotated by holding the Option key and clicking and dragging with the right or left mouse buttons respectively in the Cinema window. Clicking and dragging with the middle mouse button will adjust the distance of the object from the camera.

Translate, Rotate, Scale: These interaction modes can be used in either the Cinema or a Viewer window, and will create a manipulator widget that can be used to translate, rotate or scale the object/camera. These manipulators are positioned at the object's origin (0.0, 0.0, 0.0) by default, or at the centre of the object's bounding box if the Centre Pivot button was pressed. Holding down the Control key whilst dragging will allow finer adjustments to be made. Note that when using a manipulator in the Viewer window, the object will be moved in 3D space, but using a manipulator in the Cinema window will move the camera around the object.

Import: Display a file browser to import a geometric mesh.

Generate Survey: Generate survey coordinates for selected trackers.

View: Position the camera so it is viewing the geometric mesh.

Centre Pivot: Move the transformation pivot of the mesh to the centre of the object's bounding box.

Flip Normals: Reverse the direction of imported geometry normals.

LIDAR controls

Static render proxy: The fraction of LIDAR data points stored in RAM that will be displayed from a static viewpoint.

RAM cache size: The maximum amount of RAM (measured in Gb) used to store LIDAR data in memory.

Dynamic render proxy: The fraction of LIDAR data points stored in RAM that will be displayed from a dynamic viewpoint (such as when the camera is navigating around the scene).

Coordinate system: The coordinate system of the original LIDAR data file as either left or right-handed with Y or Z as the up vector. Note that after changing the coordinate system, the LIDAR data file must be imported again. The default value is Right/Z.

Import: Import a LIDAR data file and convert it into PFTrack's internal binary format. The binary file is stored in the project's scratch folder by default.

Select All/None: Select all or none of the data sets.

Un-cache: Remove the LIDAR data from RAM, and delete the binary file stored in the project's scratch folder. If the LIDAR data set is being used by other Survey Solver nodes in the project, a popup warning will be displayed asking for confirmation before the data is removed from RAM.

Remove: Remove the selected data sets (or all data sets if none are selected). If the LIDAR data set is being used by other Survey Solver nodes in the project, a popup warning will be displayed asking for confirmation. By choosing Remove Local, only the record of the LIDAR data set will be removed from the current node, keeping the binary data file intact. If Remove All is selected, records will be removed from all nodes and the binary data files will be removed from disk. Note that when storing the binary data files outside of the project folder, PFTrack will never attempt to remove them from disk.

Tracker controls


The Tracker List contains information about all trackers passed into the Survey Solver node. The list can be sorted by clicking on a column header. Trackers can be selected from the list by clicking with the left mouse button. Holding the Control key or the Shift key allows multiple selections to be made. Right-clicking in a survey coordinate column allows survey coordinates to be copied from and pasted into the desktop clipboard.

Columns

Name: The tracker name.

Survey: Indicates whether the survey point is active or not. To activate or de-activate multiple trackers at the same time, select them and click the Activate or Deactivate buttons on the right of the tracker list.

Survey X, Y and Z: The survey coordinates of each tracker in the coordinate system specified below the list. If a tracker is already solved, enabling the point will initialise the survey coordinate to the current 3D tracker position.

Uncertainty: The survey coordinate uncertainty. This value is used when survey coordinates have not been measured accurately. A non-zero uncertainty allows the 3D tracker position to change during the solve, limited to a small region around the survey coordinates. An uncertainty value of U means that the X coordinate of a tracker can vary between X-U and X+U, and similarly for the Y and Z coordinates.

Residual: The residual projection error (measured in pixels) for the tracker in the current frame. The projection error is the difference between the tracker path position and the projection of the 3D tracker point onto the camera plane. Ideally, the projection error should be close to zero for each tracker.

Distance: The distance from the current camera frame to the tracker's 3D position in space.
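An uncertainty value of U defines a box around the survey coordinates. The sketch below expresses that allowed region as a per-axis clamp; whether the solver enforces it as a hard limit or by some other mechanism is not documented, so treat this only as an illustration of the region. `clamp_to_uncertainty` is a hypothetical helper.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]


def clamp_to_uncertainty(solved: Vec3, survey: Vec3, uncertainty: float) -> Vec3:
    """Limit each coordinate to [survey - U, survey + U].

    An uncertainty of 0 pins the tracker exactly to its survey coordinates.
    """
    if uncertainty < 0:
        raise ValueError("uncertainty must be non-negative")
    return (min(max(solved[0], survey[0] - uncertainty), survey[0] + uncertainty),
            min(max(solved[1], survey[1] - uncertainty), survey[1] + uncertainty),
            min(max(solved[2], survey[2] - uncertainty), survey[2] + uncertainty))


survey = (3.395012, -0.197068, -3.248246)
print(clamp_to_uncertainty((3.5, -0.1, -3.3), survey, 0.01))
```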

Controls

Coordinate system: The coordinate system used to display numerical survey coordinates in the tracker list, as well as when importing or exporting survey data or copying data to and from the clipboard. Note that this option has no effect on the survey coordinates displayed in the Cinema window, as these are always in the application's coordinate system, which is left-handed with Y up.

Import: Display a file browser allowing an ASCII text file containing survey coordinates to be loaded from disk. An example text file is given above.

Export: Display a file browser allowing an ASCII text file containing survey coordinates to be saved to disk. An example text file is given above.

Attach: Allow the selected tracker to be attached to a LIDAR data point by clicking on a LIDAR point with the left mouse button in a Viewer window. Note that when navigating the camera in the perspective Viewer window, the camera can be rotated around its current position by holding the Option key and dragging with the left mouse button.

Search Size: The search size (measured in world coordinates) that will be examined when attaching trackers.

Attach to RAM cached points only: When enabled, only the LIDAR data points cached in RAM will be searched when attaching a tracker. When not enabled, the entire LIDAR data set will be searched on disk which may decrease performance. Note that the render proxy settings do not affect the number of points being searched in RAM.

All / None: Select all or none of the trackers from the list.

Show Survey: When enabled, only trackers with active survey coordinates will be displayed.

Activate: Activate all selected survey points. Survey points can also be activated individually by ticking the Survey column in the tracker list.

Deactivate: Deactivate all selected survey points. Survey points can be deactivated individually by un-ticking the Survey column in the tracker list.

Enable: Enable all selected trackers. Enabled trackers will be included in the solve and refine processes.

Disable: Disable all selected trackers. Disabled trackers will not be included in the solve and refine processes.

Set Uncertainty: Display a popup window allowing an uncertainty value to be assigned to all selected trackers at the same time.

Set Position: Allow a tracker to be placed in multiple frames of a secondary solved camera to generate survey coordinates in the same world-space. To remove a tracker from a frame in the secondary camera, hold the Shift key whilst clicking with the left mouse button.
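Placing a tracker in several frames of a solved camera gives several viewing rays, and a 3D position can be recovered where those rays (nearly) meet. The sketch below uses the standard least-squares closest-point-to-rays method as an illustration; it assumes each placement has been converted to a ray from the camera centre through the clicked pixel, and it is not necessarily the method Set Position uses. The name `triangulate` is hypothetical.

```python
# Sketch: least-squares intersection of viewing rays (the "midpoint" method).
# ASSUMPTION: each placement of the tracker in a secondary camera frame gives
# a ray from the camera centre through the clicked pixel. This is a standard
# technique, not necessarily the one Set Position uses.
import math

Vec3 = tuple[float, float, float]


def det3(m: list[list[float]]) -> float:
    """Determinant of a 3x3 matrix stored as a list of rows."""
    return (m[0][0] * (m[1][1] * m[2][2] - m[1][2] * m[2][1])
            - m[0][1] * (m[1][0] * m[2][2] - m[1][2] * m[2][0])
            + m[0][2] * (m[1][0] * m[2][1] - m[1][1] * m[2][0]))


def triangulate(rays: list[tuple[Vec3, Vec3]]) -> Vec3:
    """Point minimising the summed squared distance to every ray.

    Solves sum_i (I - u_i u_i^T) p = sum_i (I - u_i u_i^T) c_i, where c_i is a
    ray origin and u_i its unit direction.
    """
    A = [[0.0] * 3 for _ in range(3)]
    b = [0.0] * 3
    for c, d in rays:
        n = math.sqrt(sum(v * v for v in d))
        u = [v / n for v in d]
        for i in range(3):
            for j in range(3):
                m = (1.0 if i == j else 0.0) - u[i] * u[j]  # entry of I - u u^T
                A[i][j] += m
                b[i] += m * c[j]
    D = det3(A)
    if abs(D) < 1e-12:
        raise ValueError("need at least two non-parallel rays")
    # Cramer's rule: replace column k of A with b.
    result = []
    for k in range(3):
        Ak = [[b[i] if j == k else A[i][j] for j in range(3)] for i in range(3)]
        result.append(det3(Ak) / D)
    return (result[0], result[1], result[2])


if __name__ == "__main__":
    rays = [((0.0, 0.0, 0.0), (1.0, 1.0, 0.0)), ((2.0, 0.0, 0.0), (-1.0, 1.0, 0.0))]
    print(triangulate(rays))  # approximately (1.0, 1.0, 0.0)
```

Using more frames with a wide spread of viewing directions generally makes the recovered position less sensitive to small placement errors, while rays that are nearly parallel make the system ill-conditioned.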

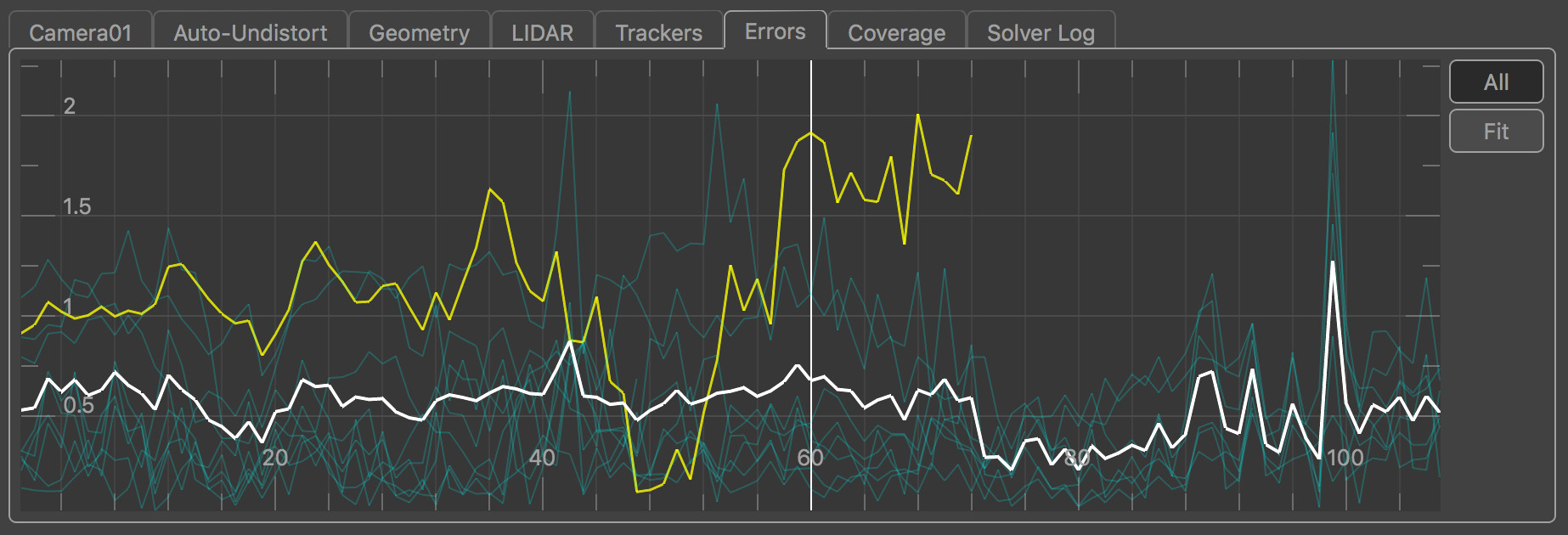

The Error Graph

The error graph plots the projection error (measured in pixels) for each tracker in each frame, along with the average projection error for all trackers visible in that frame. The projection error is the distance between the tracker path position and the projection of the tracker's 3D point onto the image plane. Ideally, the projection error should be close to zero for each tracker. Selected trackers are shown in yellow, unselected trackers in blue, and the average projection error in white. The error graph can be translated and scaled by clicking and dragging with the right or middle mouse buttons.
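To make the definition concrete, the sketch below computes a projection error for one tracker in one frame and the per-frame average. It assumes an ideal pinhole camera with no lens distortion, a world-to-camera rotation `R` and translation `t`, a focal length `f` in pixels, a principal point `(cx, cy)`, and a camera looking down +Z. The solver's real camera model may differ, and all names here are hypothetical.

```python
# Sketch: projection error for one tracker, plus the per-frame average.
# ASSUMPTIONS: ideal pinhole camera, x_cam = R * X + t, camera looking down
# +Z, square pixels, no lens distortion. Names are hypothetical.
import math


def project(point, R, t, f, cx, cy):
    """Project a 3D world point to pixel coordinates (u, v)."""
    xc = [sum(R[i][j] * point[j] for j in range(3)) + t[i] for i in range(3)]
    return (f * xc[0] / xc[2] + cx, f * xc[1] / xc[2] + cy)


def projection_error(tracked_uv, point, R, t, f, cx, cy):
    """Pixel distance between the tracked 2D position and the projected 3D point."""
    u, v = project(point, R, t, f, cx, cy)
    return math.hypot(tracked_uv[0] - u, tracked_uv[1] - v)


def average_error(errors):
    """Mean error over the trackers visible in one frame."""
    return sum(errors) / len(errors) if errors else 0.0


if __name__ == "__main__":
    I = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    e = projection_error((963.0, 544.0), (0.0, 0.0, 10.0), I, (0.0, 0.0, 0.0),
                         1000.0, 960.0, 540.0)
    print(e)                         # 5.0
    print(average_error([e, 1.0]))   # 3.0
```

Here the 3D point projects exactly onto the principal point (960, 540), so a tracker path position 3 pixels right and 4 pixels down gives an error of 5 pixels.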

Individual trackers can be selected from the graph by clicking with the left mouse button. When a tracker is selected, the current frame will change to match the frame number that was clicked in the graph. If the Centre View display option is also enabled, the Cinema window will be panned to display the tracker projection in the centre.

A tracker may have a large projection error for several reasons. If a tracker has a large projection error in most frames, it often means that the tracker path does not correspond to a fixed point in 3D space. This can happen when a tracker is positioned over a "virtual corner": an image feature that looks like a corner in the image but is formed by edges in the scene that lie at different distances from the camera. As the camera moves, the apparent intersection of these edges shifts. In these cases, the tracker should probably be deactivated.

If a tracker is tracked accurately but its projection error increases significantly in certain frames, this often indicates that the camera position in those frames is incorrect. Adding more trackers that are visible in those frames can often help.
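The two patterns above can be separated with a simple heuristic, sketched below: a tracker whose median error is high across its frames is a candidate virtual corner, while a frame whose average error (over the remaining trackers) is high points towards a bad camera position. The 2-pixel threshold, the use of the median, and the `diagnose` name are all hypothetical choices for illustration, not part of the Survey Solver.

```python
# Sketch: separate "bad tracker" from "bad frame" patterns in projection errors.
# ASSUMPTIONS: errors are available per tracker per frame; the threshold and
# the median/mean statistics are hypothetical choices.
from statistics import median


def diagnose(errors: dict[str, dict[int, float]], threshold: float = 2.0):
    """Return (suspect_trackers, suspect_frames) from {tracker: {frame: error_px}}."""
    # High error in most frames: the path may not be a fixed 3D point.
    suspect_trackers = sorted(
        name for name, per_frame in errors.items()
        if per_frame and median(per_frame.values()) > threshold)
    # Average the remaining trackers per frame; a high value suggests the
    # camera position in that frame is wrong.
    by_frame: dict[int, list[float]] = {}
    for name, per_frame in errors.items():
        if name in suspect_trackers:
            continue
        for frame, e in per_frame.items():
            by_frame.setdefault(frame, []).append(e)
    suspect_frames = sorted(
        frame for frame, es in by_frame.items() if sum(es) / len(es) > threshold)
    return suspect_trackers, suspect_frames


if __name__ == "__main__":
    errors = {
        "A": {1: 0.3, 2: 0.4, 3: 3.0},
        "B": {1: 0.2, 2: 0.5, 3: 2.8},
        "C": {1: 4.0, 2: 5.0, 3: 4.5},
    }
    print(diagnose(errors))  # (['C'], [3])
```

Excluding the suspect trackers before averaging keeps a single bad tracker from making every frame look suspicious.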

Show All: When enabled, error graphs are shown for all trackers. Otherwise, graphs are only shown for selected trackers.

Fit View: Scale and translate the error graph so all graphs are visible.

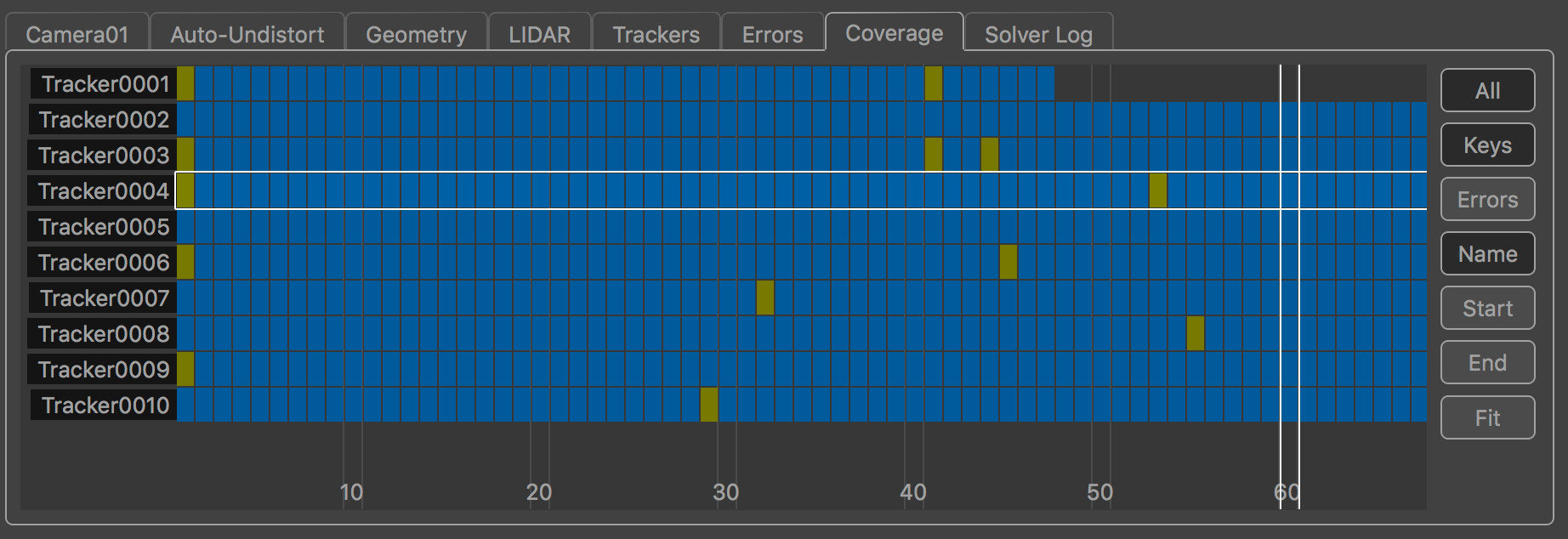

The Coverage Panel

The Coverage Panel displays information about the frames in which each tracking point has been tracked.

This can be used to evaluate how well tracking points are distributed throughout the clip. Good coverage helps to produce an accurate camera solve without jumps in the camera path.
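One way to read coverage is against the requirement, stated in the Overview, that four or more trackers be tracked between adjacent frames. The sketch below flags adjacent frame pairs that share fewer than four tracked trackers, which are likely places for a jump in the camera path. Representing coverage as a set of tracked frames per tracker is an assumption for illustration, and `weak_frame_pairs` is a hypothetical name.

```python
# Sketch: find adjacent frame pairs that share too few tracked trackers.
# ASSUMPTION: coverage is given as {tracker: set of frames where it is tracked}.


def weak_frame_pairs(coverage: dict[str, set[int]], first: int, last: int,
                     minimum: int = 4) -> list[tuple[int, int]]:
    """Return (f, f + 1) pairs in [first, last] sharing fewer than `minimum` trackers."""
    weak = []
    for f in range(first, last):
        shared = sum(1 for frames in coverage.values()
                     if f in frames and f + 1 in frames)
        if shared < minimum:
            weak.append((f, f + 1))
    return weak


if __name__ == "__main__":
    coverage = {
        "A": set(range(1, 11)), "B": set(range(1, 11)), "C": set(range(1, 11)),
        "D": set(range(1, 6)),   # tracked in frames 1-5
        "E": set(range(6, 11)),  # tracked in frames 6-10
    }
    print(weak_frame_pairs(coverage, 1, 10))  # [(5, 6)]
```

In this example D ends at frame 5 and E starts at frame 6, so only three trackers bridge that cut even though every individual frame has four trackers.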

Coverage Keys display

By default, the Coverage Panel displays keyframe information showing how tracking points have been positioned and tracked. Each frame in which the tracker is present is shown with a blue square. Yellow squares show frames in which manually generated tracking points were keyframed.

Frames in which the tracker is visible but has not been positioned are displayed in dark red. It is important to ensure that tracking points have been positioned in all frames in which they are visible, as this can significantly affect the accuracy of the camera solve.

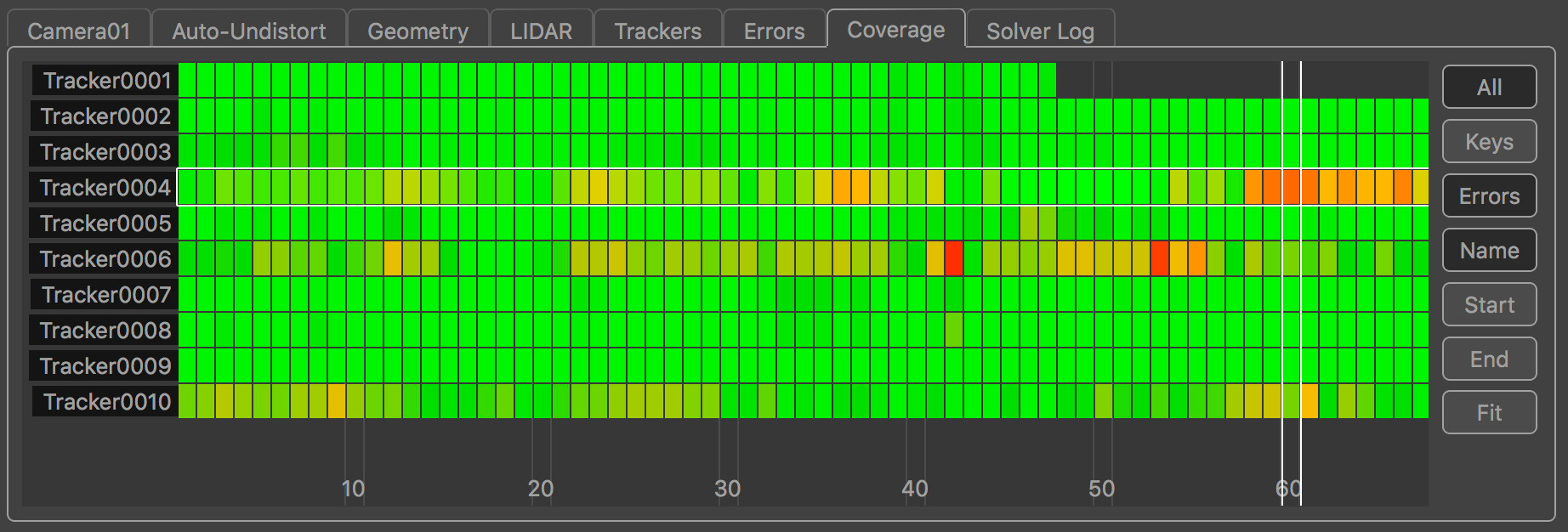

Coverage Error display

Alternatively, clicking the Errors button switches the Coverage Panel to display the projection error for each tracker. The colour of each indicator then shows the error of the solved tracking point in that frame, ranging from green (less than 0.5 pixels) through yellow (around 1.5 pixels) to red (greater than 2.5 pixels).
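The colour scale can be pictured as a function from pixel error to colour. The sketch below uses the three anchor values given above, but the linear blending between them is an assumption; the panel may instead use discrete bands. The `error_colour` name and the RGB values chosen for green, yellow and red are hypothetical.

```python
# Sketch: map a projection error (pixels) to an RGB colour in the 0..1 range.
# ASSUMPTION: linear blending between the anchors green (0.5 px),
# yellow (1.5 px) and red (2.5 px); the panel may use discrete bands instead.

GREEN = (0.0, 1.0, 0.0)
YELLOW = (1.0, 1.0, 0.0)
RED = (1.0, 0.0, 0.0)


def lerp(a, b, s):
    """Linear interpolation between colours a and b, with s in [0, 1]."""
    return tuple(x + (y - x) * s for x, y in zip(a, b))


def error_colour(error_px: float):
    if error_px <= 0.5:
        return GREEN
    if error_px <= 1.5:
        return lerp(GREEN, YELLOW, (error_px - 0.5) / 1.0)
    if error_px <= 2.5:
        return lerp(YELLOW, RED, (error_px - 1.5) / 1.0)
    return RED


if __name__ == "__main__":
    for e in (0.2, 1.0, 1.5, 2.0, 3.0):
        print(e, error_colour(e))
```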

Coverage Panel controls

The Coverage Panel can be panned horizontally or vertically by clicking and dragging with the right mouse button. Clicking and dragging with the middle mouse button zooms horizontally or vertically, changing the number of tracking points and frames displayed in the panel.

The mouse wheel can also be used to zoom horizontally, or vertically if the Option key is held.

Clicking on an indicator with the left mouse button will select the tracking point and display that frame in the Cinema window. Holding the Control key will allow multiple tracking points to be selected.

Double-clicking on an indicator with the left mouse button will select the tracking point and immediately switch to display the node which generated that tracking point. This can be used to quickly jump to a User Track node to manually adjust a tracking point to correct a tracking error.

All: Switch between displaying all trackers, or only those trackers visible in the current frame.

Keys: Display keyframe information, showing where tracking points have been tracked and manually positioned.

Errors: Display projection error information.

Name: Sort the tracking points by name, in alphabetical order.

Start: Sort the tracking points according to the first frame in which they are tracked.

End: Sort the tracking points according to the last frame in which they are tracked.

Fit: Fit the tracking points display to the window. This will zoom in or out as necessary, displaying as many tracking points and frames as will fit in the viewport.
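The Name, Start and End buttons amount to sorting by different keys. The sketch below shows the idea, assuming coverage is stored as a non-empty set of tracked frames per tracker and breaking ties by name. The tie-breaking rule, the case-sensitive name comparison and the `sort_trackers` name are assumptions, not documented behaviour.

```python
# Sketch: the three Coverage Panel sort orders as sort keys.
# ASSUMPTIONS: {tracker: non-empty set of tracked frames}; ties broken by name;
# names compared case-sensitively.


def sort_trackers(coverage: dict[str, set[int]], key: str = "name") -> list[str]:
    keys = {
        "name": lambda n: n,                           # alphabetical
        "start": lambda n: (min(coverage[n]), n),      # first tracked frame
        "end": lambda n: (max(coverage[n]), n),        # last tracked frame
    }
    return sorted(coverage, key=keys[key])


if __name__ == "__main__":
    coverage = {"B": {3, 4, 5}, "A": {5, 6}, "C": {1, 2, 9}}
    print(sort_trackers(coverage, "name"))   # ['A', 'B', 'C']
    print(sort_trackers(coverage, "start"))  # ['C', 'B', 'A']
    print(sort_trackers(coverage, "end"))    # ['B', 'A', 'C']
```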

Default Keyboard Shortcuts

Keyboard shortcuts can be customised in the Preferences.

| Command | Shortcut |
|---|---|
| Set Initial Frame | Control+A |
| Solve All | Shift+S |
| Refine All | Shift+R |
| All/None Trackers | Shift+L |
| Show Survey | Shift+H |
| Activate | Shift+A |
| Deactivate | Shift+D |
| Enable | Shift+B |
| Disable | Shift+N |
| Set Position | Shift+W |
| Show Ground | Control+G |
| Show Horizon | Control+H |
| Show Geometry | Control+E |
| Show Names | Control+N |
| Show Info | Control+I |
| Move Pivot | Shift+P |
| Marquee | Shift+M |
| Centre View | Shift+C |
| All Errors | Shift+E |
| Fit | Shift+F |
| Attach | Shift+T |
| Show LIDAR | Control+L |
| Show LIDAR 3D | Control+K |
| Fly mode | Shift+G |
| Translate mode | Shift+H |
| Rotate mode | Shift+J |
| Scale mode | Shift+K |
| Next Clip | C |

Alias|Wavefront is a trademark of Alias|Wavefront, a division of Silicon Graphics Limited in the United States and/or other countries worldwide.

FBX is a registered trademark of Autodesk Inc. in the USA and other countries.

Alembic is a trademark of and copyright 2009-2015 Lucasfilm Entertainment Company Ltd. or Lucasfilm Ltd. All rights reserved. Industrial Light & Magic, ILM and the Bulb and Gear design logo are all registered trademarks or service marks of Lucasfilm Ltd. Copyright 2009-2015 Sony Pictures Imageworks Inc. All rights reserved.