Clip Input

Overview

The Clip Input node is used to load media shot by movie cameras, and to define the parameters of the clip and camera that are passed down the tracking tree for matchmoving.

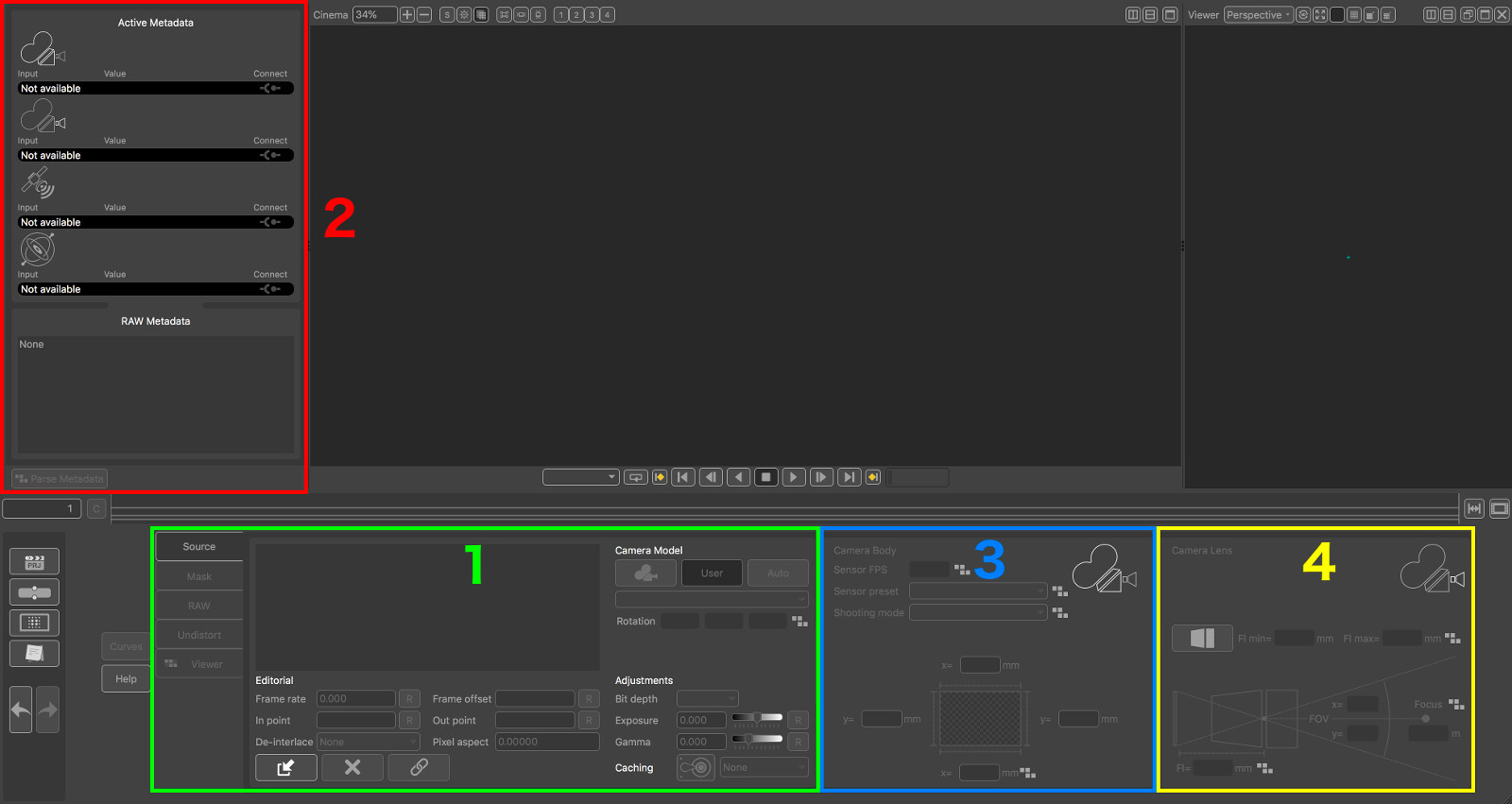


Along with the media thumbnail and the node name (which defaults to the name of the loaded media) (1), the node icon also indicates whether metadata is available in the media (2), and whether the media has been cached to disk (3).

When a Clip Input node is activated by double-clicking on it with the left mouse button, the PFTrack GUI will switch to display the Cinema Window, a single Viewer Window and the following important areas:

(1) The Clip Input Parameters

(2) The Metadata window

(3) The Camera Body panel

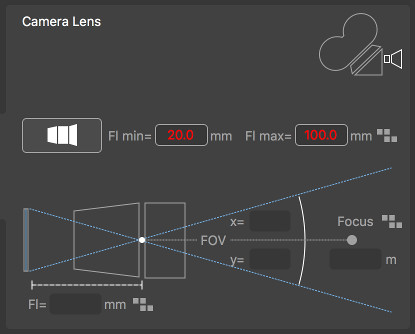

(4) The Camera Lens panel

The Clip Input Parameters are split into separate sections, selected by clicking the section tab on the left of the panel.

Source: The source media information. This is also where media can be loaded from disk.

Mask: The mask panel where media containing a pre-rendered mask can be loaded from disk.

RAW: The RAW panel where parameters for decoding RAW media image data can be adjusted.

Undistort: The Undistort panel where lens distortion correction parameters can be defined.

Metadata Viewer: The dynamic metadata viewer panel, showing metadata curves for any compatible metadata parameters.

Virtual Camera Model

In order to perform matchmoving tasks, PFTrack must create a Virtual Camera Model that matches the real camera as closely as possible. The tools available in the Clip Input node must be used to create this virtual camera model before the camera can be tracked. This includes, amongst other things:

The camera focal length

The camera sensor size

The camera lens distortion

This information may be available as metadata in your media files, or may be specified manually. If no information is available, PFTrack can also attempt to estimate the data automatically.

The following sections describe how media can be loaded and the parameters of the virtual camera model set, with or without metadata.

Source

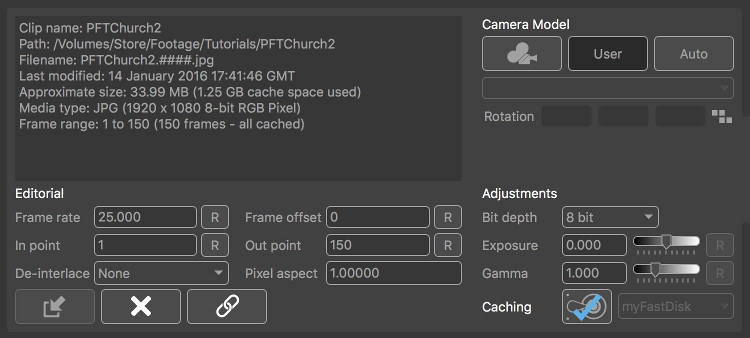

The source panel is where media can be loaded, and also lists important information about the source material such as its location on disk, frame numbering and duration.

The Camera Model buttons are used to specify the type of camera model used for the media. Further details are given below in the Camera section.

Loading and removing media

Load Media: Clicking this button will display the File Browser where the media can be located on disk and loaded into the node.

Remove Media: Once media has been loaded, it can be removed if required by clicking this button. Note that media must be removed from the node before the node can be deleted from the tracking tree. This will remove the media from the Clip Input node, but will not affect any of the original media files on disk.

Relink Media: If the location of media files on disk has changed since it was imported, the Cinema window will display Missing Media. Clicking this button will display the File Browser allowing the original source material to be found. Note that the resolution and frame count must be the same as the original clip in order for it to be re-linked. If you wish to change the resolution or frame count of a clip, use the Replace Footage node.

Clip parameters

These controls are available to adjust the representation of the clip in PFTrack (Note that these will not affect the original media files on disk in any way).

Frame rate: The number of frames-per-second for the clip. Clicking the R button will reset the frame rate back to the default value for this clip.

Frame offset: This can be used to offset the frame number that is read from the clip. For example, setting frame offset to +10 will mean that frame 1 of the clip displays frame 11 (1+10) of the source material.

In point: Specify the first frame of the clip to use. Clicking the R button will reset the in point back to the original value.

Out point: Specify the last frame of the clip to use. Clicking the R button will reset the out point back to the original value.

De-interlace mode: The de-interlacing option to use for the clip. Replicate upper/lower will replicate new scanlines from either the upper or lower fields. Interpolate upper/lower will interpolate new scanlines from either the upper or lower fields. Blend will blend both upper and lower fields together. Temporal Blend will use motion analysis to align the upper and lower fields before blending. Field Separation will separate out the upper and lower fields and scale them up to fill separate frames, doubling the length of the clip.

Pixel aspect: Displays the pixel aspect ratio for the clip.

Bit depth: This menu can be used to change the bit-depth of the media displayed in PFTrack. This can be used, for example, to change from 16-bit to 8-bit pixels and reduce the amount of RAM used to cache media.

Exposure: The exposure adjustment (measured in f-stops) to the image data. The R button will reset this back to the default value, which is 0.0.

Gamma: The gamma adjustment applied to the image data. The R button will reset this back to the default value, which is 1.0.
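The frame offset, in/out point, exposure and bit-depth controls above amount to simple arithmetic. The following Python sketch illustrates it under stated assumptions: the function names are hypothetical (not part of PFTrack), exposure is assumed to act as a 2^stops multiplier on linear image data, and the memory estimate ignores any per-frame overhead.

```python
"""Illustrative clip-parameter arithmetic; hypothetical helpers, not PFTrack's API."""


def source_frame(clip_frame: int, frame_offset: int) -> int:
    """Source frame displayed at a clip frame (offset +10 shows source frame 11 at clip frame 1)."""
    return clip_frame + frame_offset


def clip_length(in_point: int, out_point: int) -> int:
    """Number of frames between the in and out points, inclusive."""
    if out_point < in_point:
        raise ValueError("out point must not come before the in point")
    return out_point - in_point + 1


def apply_exposure(linear_value: float, stops: float) -> float:
    """Assumes linear data: each f-stop doubles (or halves) the value; 0.0 leaves it unchanged."""
    return linear_value * 2.0 ** stops


def cache_bytes(width: int, height: int, channels: int, bit_depth: int, frames: int) -> int:
    """Rough RAM needed to cache uncompressed frames at a given bit depth."""
    return width * height * channels * (bit_depth // 8) * frames


if __name__ == "__main__":
    print(source_frame(1, 10))        # 11
    print(clip_length(1, 100))        # 100
    print(apply_exposure(0.25, 2.0))  # 1.0
    # Dropping from 16-bit to 8-bit halves the cache footprint.
    print(cache_bytes(1920, 1080, 3, 16, 100) // cache_bytes(1920, 1080, 3, 8, 100))  # 2
```

The memory estimate shows why reducing the bit depth from 16-bit to 8-bit is an effective way to fit more frames into the RAM cache.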

Disk caching

If media is being loaded from an external resource (such as a network-attached drive), read performance may be reduced when compared to reading data directly from a local hard disk. In these situations, a Disk Cache can be created in the System Preferences window to improve performance. Further information on how to create a Disk Cache is provided in the Preferences section.

Clicking the cache button will render the media to the cache location specified in the cache menu.

Once the media is cached, the button icon will change to show that the media has been cached successfully. Clicking the button again will clear the media from the disk cache and free the disk space used.

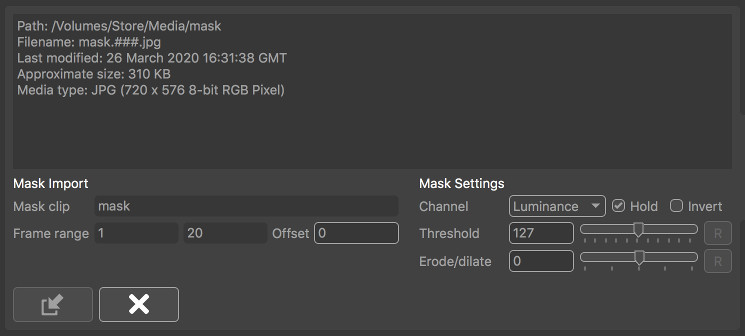

Mask

The Mask panel is where image-based masks can be loaded and applied to the clip. By default, the source material for the mask will be scaled to fit both the clip resolution and the number of frames. Once an image-based mask is loaded, it will be passed down-stream automatically to each node which can make use of it.

Note that in addition to image-based masks, Roto and Keyer masks can also be generated and connected to any node down-stream from the Clip Input node.

Load mask: Open the File Browser to load a mask clip.

Remove mask: Clicking this button will remove the mask from the Clip Input node (note that this will not affect any of the source material files on disk).

Mask clip: This displays the filename of the mask clip when loaded.

Frame range: This displays the first and last frame of the mask source material.

Offset: Shift the source material forwards or backwards by a specified number of frames.

Channel: Specify which image channel is used to create the mask from the source material. Options are Red, Green, Blue and Alpha to select one image channel, or Luminance to calculate pixel luminance.

Hold: When enabled, frames outside of the original source material range will be clamped, so the first and/or last frames of the source material will be held. When disabled, frames outside the original range will not display any mask.

Invert: Invert the mask in the clip.

Threshold: This specifies the pixel threshold (measured as an 8-bit value in the range 0..255) which is used to convert a grey-scale image into a binary mask. Pixel values less than the threshold are set to be transparent, and values greater than it are set to be opaque.

Erode/dilate: Erode or dilate the mask. Positive values will dilate the mask, whilst negative values will erode.
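The Channel, Threshold, Invert, Hold and Erode/dilate controls describe a simple per-pixel pipeline. The sketch below is one plausible reading of it, not PFTrack's code: the helper names are hypothetical, Rec. 709 luminance weights are assumed, pixel values are treated as 8-bit (0..255), pixels exactly at the threshold are assumed transparent, and the mask is represented as a small grid of booleans.

```python
"""Illustrative mask construction; hypothetical helpers, not PFTrack's implementation."""
from typing import List, Optional, Sequence

Mask = List[List[bool]]
CHANNEL_INDEX = {"Red": 0, "Green": 1, "Blue": 2, "Alpha": 3}


def channel_value(rgba: Sequence[float], channel: str) -> float:
    """Pick one channel, or compute luminance (Rec. 709 weights are an assumption)."""
    if channel == "Luminance":
        r, g, b = rgba[0], rgba[1], rgba[2]
        return 0.2126 * r + 0.7152 * g + 0.0722 * b
    return rgba[CHANNEL_INDEX[channel]]


def is_opaque(rgba: Sequence[float], channel: str, threshold: int, invert: bool = False) -> bool:
    """Values above the threshold are opaque; equal values are treated as transparent here."""
    opaque = channel_value(rgba, channel) > threshold
    return opaque != invert  # XOR: Invert flips the result


def held_frame(frame: int, first: int, last: int, hold: bool) -> Optional[int]:
    """Mask source frame to use, or None when outside the range and Hold is disabled."""
    if first <= frame <= last:
        return frame
    if not hold:
        return None
    return min(max(frame, first), last)


def erode_dilate(mask: Mask, amount: int) -> Mask:
    """Positive amount dilates, negative erodes, one pixel per step over a 3x3 neighbourhood."""
    height, width = len(mask), len(mask[0])
    grow = amount > 0
    for _ in range(abs(amount)):
        result = [[False] * width for _ in range(height)]
        for y in range(height):
            for x in range(width):
                neighbours = [
                    mask[j][i]
                    for j in range(max(0, y - 1), min(height, y + 2))
                    for i in range(max(0, x - 1), min(width, x + 2))
                ]
                result[y][x] = any(neighbours) if grow else all(neighbours)
        mask = result
    return mask
```

Dilation marks a pixel opaque if any neighbour is opaque, and erosion keeps it opaque only if all neighbours are, which is why positive and negative values grow and shrink the mask respectively.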

Once an image-based mask is loaded, it can be used downstream in any node which supports other types of mask. Where supported, the masks in the node can be toggled on or off as required, or inverted if necessary, by using the mask button in the node's control panel. This button can be toggled between three states:

- Enabled: any masks connected to the node should be used.

- Inverted: any masks connected to the node should be used, but they should be inverted first.

- Disabled: any masks connected to the node should be ignored.

RAW

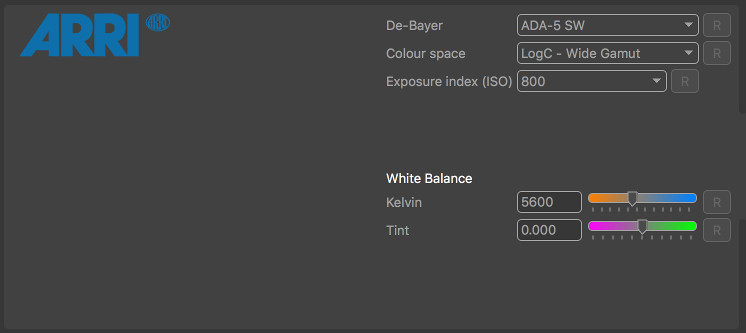

The RAW panel contains parameters used to de-bayer RAW media clips and produce an RGB image. The controls in this panel are specific to the type of media loaded, and are only available for ARRI RAW and RED R3D media.

ARRI RAW Decoding

When reading ARRI RAW footage, the de-bayering algorithm used to convert ARRI RAW footage to an RGB image can be specified using the De-Bayer menu. Note that currently, only CPU-based decoding methods are supported. The colour space, ISO exposure index, white balance temperature and green/magenta tint will also be read automatically from the source media, although these values can be overwritten if required. For example, the exposure index of the shot can be decreased to provide more detail for feature tracking if necessary.

De-Bayer: The de-bayering algorithm used to convert an ARRI RAW file to RGB. Note that GPU-based de-bayering is not currently supported, and some de-bayering algorithms run faster on the CPU than others.

Colour space: The colour space used when decoding.

Exposure index (ISO): The ISO exposure index used when decoding.

White balance (K): The colour temperature of the white balance used when decoding.

Tint: The green/magenta tint of the white balance used when decoding.

R: Reset any parameter back to the value specified in the source material file.

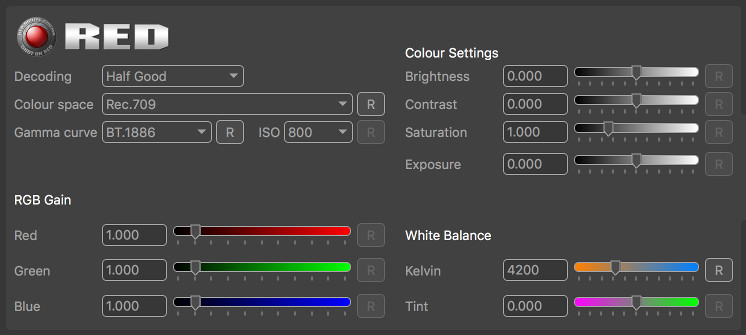

RED R3D Decoding

When reading RED R3D footage, various decoding parameters can be selected to choose between higher quality or faster decoding, along with using 8-bit or 16-bit pixel data. Colour space and white balance can also be adjusted, along with exposure and brightness/contrast controls.

Decoding: This menu provides choice between a slower but more accurate de-bayer algorithm (Full Premium) and faster algorithms such as Half Premium, Half Good and Quarter Good.

Colour space: The colour space used when decoding.

Gamma curve: The gamma profile used when decoding.

ISO: The ISO value used when decoding.

Brightness: The brightness adjustment.

Contrast: The contrast adjustment.

Saturation: The saturation adjustment.

Exposure: The exposure adjustment.

RGB Gain: The gain parameters in the red, green and blue channels.

White balance: The colour temperature for white balance adjustment.

Tint: The overall tint of the white balance adjustment.

R: Reset any parameter back to the value specified in the RED R3D file.

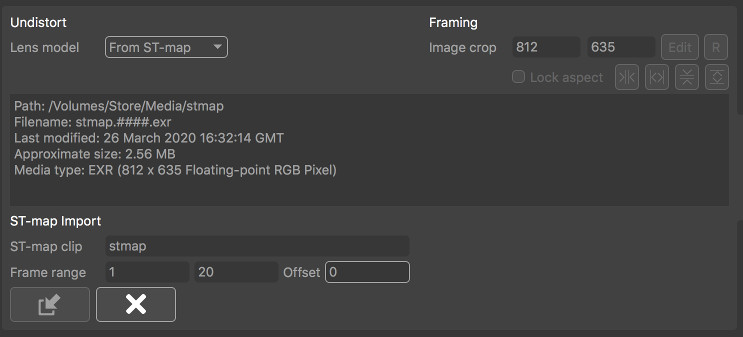

Undistort

The Undistort panel can be used to specify how lens distortion should be corrected for. It is also the place where OpenEXR ST-maps can be loaded from an external source and applied to the clip.

The Lens model menu is used to specify how to correct for lens distortion for this clip:

None: No lens distortion correction will be applied.

From Preset: If a camera preset is being used, selecting this option will take the lens distortion model from the camera preset and use it to undistort the clip. If the lens distortion model is constant, or the camera focal length is known at this time, the clip will be undistorted immediately. If not, lens distortion will be corrected for down-stream once the camera focal length is known (e.g. after the camera has been solved).

Automatic: When this option is selected, the amount of lens distortion will be estimated automatically and removed once the camera has been solved.

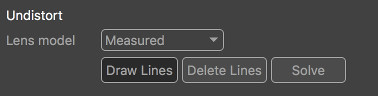

Measured: Selecting this option will enable the measurement tools and allow lens distortion parameters to be measured directly from the image (see below for further details). Once distortion has been measured, it will be removed from the clip immediately and the undistorted clip passed down-stream for tracking.

From Metadata: If lens distortion information is available in the media metadata, selecting this option will use it to correct for lens distortion immediately and pass the undistorted clip down-stream for tracking.

From ST-Map: Selecting this option will allow OpenEXR ST-maps to be loaded from an external source and applied immediately to the clip to remove lens distortion. The undistorted clip is then passed down-stream for tracking.

ST-Maps

In order to load an external OpenEXR ST-map from disk, select From ST-Map from the Distortion model menu. Media can be managed using the following buttons:

Load ST-Map: Open the File Browser to load an ST-Map clip.

Remove ST-Map: Clicking this button will remove the ST-Map from the Clip Input node (note that this will not affect any of the source material files on disk).

If the frame-range of the ST-Map clip does not match the frame-range of the clip to be undistorted, the first and last frames of the ST-Map clip will be held. The resolution of the undistorted clip will be defined by the resolution of the ST-map clip. Note that this will affect the sensor area and field-of-view displayed in the Camera panel.

ST-map clip: This displays the name of the clip providing the ST-map, if available.

Frame range: This displays the first and last frame of the ST-map source material.

Offset: Shift the source material forwards or backwards by a specified number of frames.
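Conceptually, each pixel of an ST-map stores the normalised source coordinates (s, t) to sample, typically in the red and green channels. The sketch below illustrates the frame-hold and resolution behaviour described above. It assumes a common convention (s running left to right, t running bottom to top), uses nearest-neighbour sampling for brevity where production tools would filter, and uses hypothetical helper names; check the convention your compositing tool uses before relying on it.

```python
"""Illustrative ST-map application; hypothetical helpers, not PFTrack's implementation."""
from typing import List, Sequence, Tuple

StMap = Sequence[Sequence[Tuple[float, float]]]


def st_map_frame(frame: int, first: int, last: int) -> int:
    """ST-map frame used for a clip frame: first/last frames are held outside the range."""
    return min(max(frame, first), last)


def _clamp(value: int, low: int, high: int) -> int:
    return max(low, min(value, high))


def apply_st_map(source: Sequence[Sequence[float]], st_map: StMap) -> List[List[float]]:
    """Nearest-neighbour remap of source pixels through an ST-map.

    Assumed convention: s runs 0..1 left to right, t runs 0..1 bottom to top,
    and both grids are stored top row first. The output has the ST-map's
    resolution, not the source's.
    """
    src_h, src_w = len(source), len(source[0])
    output = []
    for row in st_map:
        out_row = []
        for s, t in row:
            x = _clamp(int(s * src_w), 0, src_w - 1)
            y = _clamp(int((1.0 - t) * src_h), 0, src_h - 1)
            out_row.append(source[y][x])
        output.append(out_row)
    return output
```

Because the output grid is built by iterating over the ST-map rather than the source, the undistorted clip inherits the ST-map's resolution, which in turn changes the sensor area reported in the Camera panel.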

Re-applying lens distortion

If lens distortion needs to be re-applied to a clip, instead of removed, this can still be achieved in PFTrack using these tools. After tracking, use the Scene Export node to export ST-Maps suitable for both undistort and redistort.

Then, instead of using your redistort ST-Maps in a third-party compositing application to re-apply lens distortion, just load your undistorted elements into a new Clip Input node, select From ST-Map as described above and load the ST-Maps generated for redistort.

This will recover images at the original frame size with lens distortion re-applied. These can then be exported down-stream using the Footage Export node.

Measured distortion correction

If no ST-maps or camera preset are available to provide information about lens distortion, the clip can still be undistorted directly in the Clip Input node provided the source material contains one or more straight lines that are distorted by the camera lens. For example, this might be a vertical line on the edge of a doorway, or the horizontal line of a brick wall.
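The idea behind measured correction is that a lens-distorted straight edge becomes straight again once the right distortion parameters are applied. The sketch below illustrates this with assumptions that are not taken from PFTrack: a one-parameter radial model in normalised coordinates centred on the optical centre, a total-least-squares straightness measure, and a brute-force search over the distortion coefficient k. Function names are hypothetical.

```python
"""Straightening traced lines with a simple radial model (illustrative only)."""
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def undistort_point(x: float, y: float, k: float) -> Point:
    """Assumed model: undistorted = p * (1 + k * r^2), with p measured from the optical centre."""
    scale = 1.0 + k * (x * x + y * y)
    return x * scale, y * scale


def straightness_error(points: Sequence[Point]) -> float:
    """Mean squared perpendicular distance from the best-fit line (total least squares)."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    # Smallest eigenvalue of the 2x2 covariance matrix = variance across the line.
    return (sxx + syy) / 2.0 - math.sqrt(((sxx - syy) / 2.0) ** 2 + sxy ** 2)


def solve_k(lines: Sequence[Sequence[Point]], k_min: float = -0.5,
            k_max: float = 0.5, steps: int = 1001) -> float:
    """Brute-force search for the k that makes every traced line straightest."""
    best_k, best_error = 0.0, math.inf
    for i in range(steps):
        k = k_min + (k_max - k_min) * i / (steps - 1)
        error = sum(
            straightness_error([undistort_point(x, y, k) for x, y in line])
            for line in lines
        )
        if error < best_error:
            best_k, best_error = k, error
    return best_k
```

Lines that pass through the optical centre stay straight under any radial distortion, so they say nothing about k; edges nearer the frame boundary constrain it best, which is one reason drawing several lines in different parts of the frame helps.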

In order to measure and correct for lens distortion, select Measured from the Distortion model menu. This will enable the Draw Lines button. Clicking this button will allow a straight line to be drawn in the Cinema window along an image edge that should be straightened.

A line is drawn by:

- Clicking and releasing the left mouse button to place the start point of the line (1),

- Moving the mouse to the end point and clicking and releasing again (2).

Once the initial line is placed, additional vertices must be inserted into the line by clicking and dragging with the left mouse button (3). Each additional vertex should be positioned to trace along the curve of the line that is to be straightened, as shown in the following image:

More than one line can be drawn if required, and lines can be placed in different frames of the clip. Holding the Shift key whilst positioning a point will display a window showing a close-up of the image pixels.

Clicking the Solve button will solve for lens distortion. If distortion can be corrected accurately, the line traced in the source material will be straightened as shown below:

Framing

After a clip has been undistorted, controls are available to adjust image cropping if required (Note: these options are not available when using an ST-map).

By default, a clip will undistort to the same resolution as the original frame. This means some pixels will be lost around the boundary of the image. Adjusting the image crop size will allow these pixels to be recovered if desired.

Please note: if you wish to export both undistort and redistort ST-maps in the Scene Export node, you should ensure that your image frame is expanded to the full extents of the undistorted image in both the horizontal and vertical directions to avoid losing any image data.

Image crop: This displays the current width and height of the undistorted image.

Edit: Selecting this button will allow the image crop area to be adjusted manually in the Cinema window by clicking and dragging on the frame boundary with the left mouse button.

R: Clicking this button will reset the image crop to match the original source material resolution.

Macroblock: Enabling this control will round the undistorted frame resolution up to the nearest 16x16 macroblock size. This can be useful when exporting undistorted clips in certain formats (such as H264 on Windows 10) to ensure the resolution is compatible with the codec.

Frame aspect: Enabling this control will ensure the original frame aspect ratio is maintained when adjusting the image crop.
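The Macroblock and Frame aspect options reduce to simple integer arithmetic. The sketch below assumes a 16-pixel macroblock as stated above and rounds to the nearest whole pixel for the aspect calculation (an assumption); the function names are hypothetical.

```python
"""Illustrative image-crop arithmetic; hypothetical helpers, not PFTrack's API."""


def round_up_to_macroblock(size: int, block: int = 16) -> int:
    """Round a dimension up to the next multiple of the macroblock size."""
    return -(-size // block) * block  # ceiling division on integers


def height_for_aspect(width: int, original_width: int, original_height: int) -> int:
    """Crop height that keeps the original frame aspect ratio for a new width."""
    return round(width * original_height / original_width)


if __name__ == "__main__":
    print(round_up_to_macroblock(1920), round_up_to_macroblock(1080))  # 1920 1088
    print(height_for_aspect(2048, 1920, 1080))                        # 1152
```

If both options are used together, rounding each dimension up to a multiple of 16 may shift the aspect ratio slightly, as the 1920 x 1080 to 1920 x 1088 example shows.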

The following buttons can be used to fit the image crop to the undistorted image data. These buttons will expand the image to the full undistorted extents, including any blank space around the image border:

- Horizontal extents: Clicking this button will fit to the full horizontal extents of the undistorted image.

- Vertical extents: Clicking this button will fit to the full vertical extents of the undistorted image.

These buttons will adjust the image crop to discard empty pixels at the image border:

- Horizontal fit: Clicking this button will fit horizontally to ensure no empty pixels are visible at the left and right-hand edges of the image.

- Vertical fit: Clicking this button will fit vertically to ensure no empty pixels are visible at the top and bottom edges of the image.

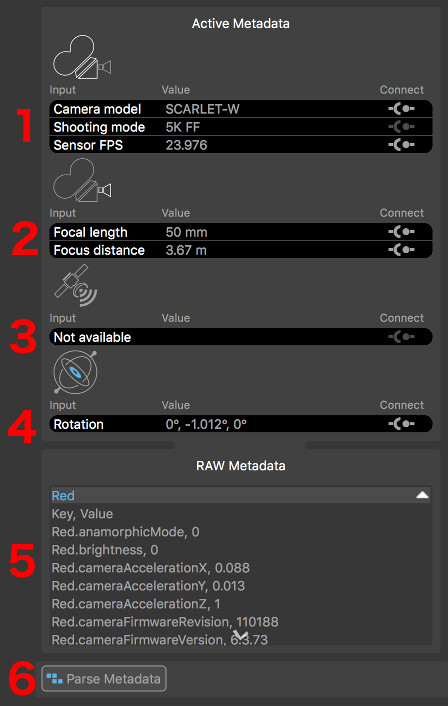

Metadata

Metadata is useful information recorded by a movie camera whilst shooting. It often consists of data such as the camera focal length and the shooting mode, and may even contain information about how the camera is moving during the shot.

Metadata can often be used to help track shots more easily, especially when data such as the camera focal length is available. PFTrack currently supports metadata embedded in media files such as:

RED R3D files

ARRI RAW, Quicktime ProRes, DPX or OpenEXR files containing ARRI Metadata

OpenEXR files containing MXF metadata generated by the Sony RAW Viewer application.

If the source media contains metadata that can be used in PFTrack, it will be displayed in the Active Metadata window at the top-left of the PFTrack interface.

Active Metadata Window

The Active Metadata Window appears at the top-left of the UI, in place of the Workpage window. The top four sections contain important information that PFTrack has identified and is able to use:

Each section displays the name of the camera parameter in the Input column, the metadata value in the Value column, and has a Connect toggle on the right.

(1): Camera Body, containing information related to the camera body and sensor.

(2): Camera Lens, containing information related to the camera lens.

(3): GPS Position, containing information related to the GPS position.

(4): IMU Data, containing information captured by on-camera IMU devices.

Note that not all file formats support all types of metadata. If no relevant metadata is available for a section, that section will display Not available.

The full list of all metadata in the media is shown in the RAW Metadata section (5). The important values that PFTrack has identified are highlighted in blue. Individual sections of the RAW Metadata list can be opened and closed using the white arrow buttons to the right of each section title. All RAW metadata can be accessed down-stream from the Clip Input node using Python scripting if required.

Further information about how to tell PFTrack which metadata is important for your media is available in the Metadata Tags section.

Parsing Metadata

Many types of metadata may vary from frame-to-frame (for example, the camera focal length, or the IMU rotation). Before this metadata can be used, the entire clip must be processed so that the metadata values for each frame can be parsed and analysed. This is achieved by clicking the Parse Metadata button (6).

Once metadata has been parsed, if information such as the camera focal length has been identified, it will be displayed in the appropriate section of the Active Metadata Window.

Camera Body Metadata

Camera body metadata generally consists of information used to help identify the size of the camera sensor (or film back). This information is essential to PFTrack when using camera focal lengths measured in real-world units such as millimeters, as it allows PFTrack to convert a focal length in millimeters into a horizontal or vertical field of view.
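Under an ideal pinhole camera model (ignoring lens distortion and focus breathing), the field of view across one sensor dimension is 2 * atan(sensor size / (2 * focal length)). The following Python sketch shows this conversion in both directions; the function names are illustrative only. It also makes clear why an incorrect sensor size changes the field of view even when the focal length is correct.

```python
"""Pinhole relationship between sensor size, focal length and field of view."""
import math


def field_of_view_deg(sensor_mm: float, focal_mm: float) -> float:
    """Field of view across one sensor dimension, in degrees."""
    return math.degrees(2.0 * math.atan(sensor_mm / (2.0 * focal_mm)))


def focal_length_mm(sensor_mm: float, fov_deg: float) -> float:
    """Inverse: focal length that produces a given field of view."""
    return sensor_mm / (2.0 * math.tan(math.radians(fov_deg) / 2.0))


if __name__ == "__main__":
    # The same 50 mm lens gives a narrower view on a smaller sensor area.
    print(field_of_view_deg(36.0, 50.0))
    print(field_of_view_deg(24.0, 50.0))
```

Apply the function to the sensor width for a horizontal field of view, or to the sensor height for a vertical one.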

MXF metadata generated by the Sony RAW Viewer application (and supported by PFTrack via OpenEXR files) contains the exact sensor size used by the camera. All other supported metadata formats only contain information such as the camera model and shooting mode, which are used as hints to help PFTrack identify a suitable sensor size from a fixed set of presets.

Further information about the relationship between focal length and sensor size is available in the Camera section.

Camera Lens Metadata

Camera lens metadata typically consists of information such as the focal length and focus distance. In order to use the camera focal length correctly, PFTrack must also know the camera's sensor size.

Further information about the relationship between focal length and sensor size is available in the Camera section.

GPS Position

GPS position metadata consists of data specifying the latitude, longitude, and possibly altitude of the camera.

IMU Data

In addition to GPS metadata describing the camera position, IMU data may be available that describes the camera orientation. This may come from either the camera body, or the camera lens depending on the types of camera and lens being used.

Connecting Metadata

If metadata is available to use, it must be connected to PFTrack's virtual camera in order to assist with any operations such as camera tracking. This is achieved by clicking the Connect toggles next to each piece of metadata.

Due to the nature of how metadata is often recorded by the camera, the values present in the files may not always be as accurate as is needed for the purposes of matchmoving. For example, there may be a lag between recording the image frame and recording the focal length of the camera at that exact time, or there may be quantization or calibration issues with the recording devices. Because of these inherent problems, PFTrack is able to use metadata as either a hint or an exact value.

Clicking the Connect toggles will cycle between three states:

- Not connected: the metadata is not connected and does not affect the camera (this is the default state).

- Hint: the metadata value is used as a Hint to the camera.

- Exact: the metadata value is used as an Exact value in the camera.

Not all states are available for each camera input. Further information on how metadata affects the camera in PFTrack is available in the Camera section.

PFCapture

On macOS, metadata stored in Quicktime files generated by The Pixel Farm's PFCapture iOS app is also supported. This provides the camera field of view and orientation information, along with GPS position.

Note that when transferring a PFCapture movie file off your iPad, please ensure you use software that copies the original movie file in its entirety onto your Mac (such as the macOS ImageCapture application). This will ensure metadata is kept. Software that transcodes the original movie file instead of copying it may remove metadata during this process.

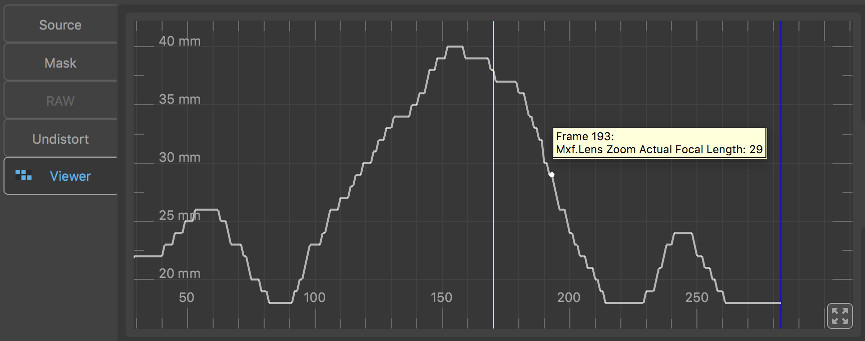

Metadata Viewer

The Metadata Viewer panel can be used to display a graph showing the values of metadata parameters which vary over the shot, such as camera focal length, focus distance, or IMU rotation data. This can be useful to check for any potential issues with the metadata values before connecting them to the camera.

To display a metadata parameter, select it in the Active Metadata Window. The graph display will remain centered on the current frame. As the mouse is moved over the graph, a floating indicator will appear showing the metadata parameter name and value.

To fit the graph display to the metadata curve, click the fit button.

Mouse controls

Click and drag the yellow frame marker with the left mouse button to change frames.

Click and drag with the middle mouse button to zoom the graph horizontally or vertically.

Click and drag with the right mouse button to scroll the graph vertically.

Camera

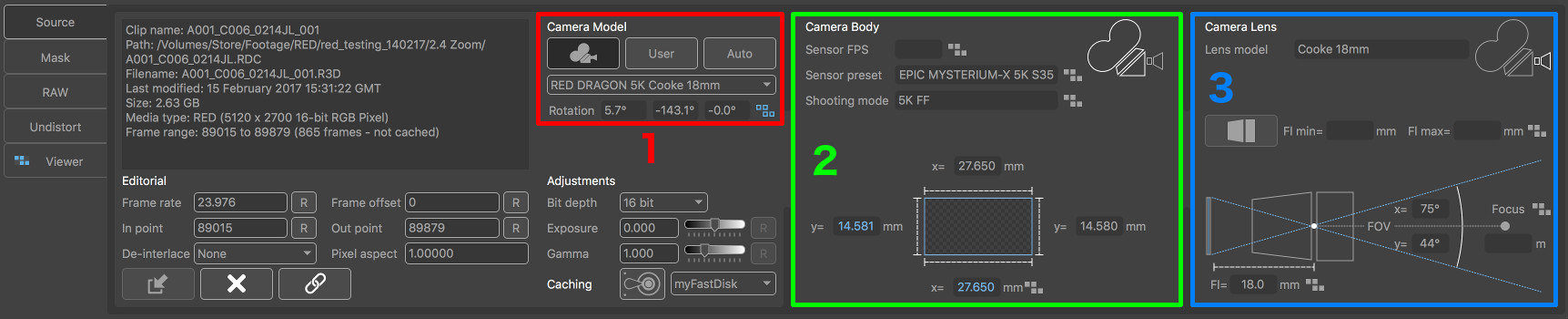

In order to perform any matchmoving tasks, PFTrack must build a Virtual Camera Model that represents (as closely as possible) the real camera that was used to capture the shot.

This virtual camera model can be constructed in various ways, and there are three important areas of the Clip Input UI which are used to define it:

(1): The Camera Model section options.

(2): The Camera Body parameters.

(3): The Camera Lens parameters.

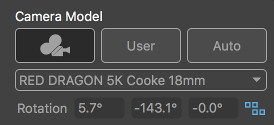

Camera Model

The Camera Model options are used to specify how to build the virtual camera model in PFTrack. There are three options available, each of which provides different levels of control over the camera body and lens parameters:

Preset: This is the Preset mode, which allows a pre-built camera preset to be selected from the preset menu below the buttons. If no preset is available that can be used for your media (e.g. because of frame resolution or aspect ratio differences), this button will not be available.

User: Enabling this option selects the User mode, which allows the individual camera parameters to be specified manually. User mode is the default setting.

Auto: Enabling this option switches the camera into Auto mode. This mode can be used when nothing is known about the camera and no metadata is available to assist. When in Auto mode, all camera parameters will be estimated automatically.

Underneath the buttons is the Preset Menu where a preset can be chosen when using the Preset mode. Information about the camera rotation is also displayed if metadata has been connected.

Further information about connecting metadata to the virtual camera is available in the Metadata section.

Preset Mode

When PFTrack attempts to track the camera, it must estimate various camera parameters such as the position and orientation at each frame, along with the camera focal length and lens distortion if necessary. This is a complicated process, and as such it helps to specify as much information about the camera as possible beforehand.

The Preset mode provides the most reliable approach to matchmoving, because camera presets contain information about the camera lens and distortion that is used directly during the matchmoving process.

When Preset mode is enabled, camera lens metadata can still be used to describe the focal length when using a zoom lens. Hints about camera rotation and focus distance can also be provided if appropriate metadata is available.

Further information on how to build camera presets is available in the Camera Presets section.

User Mode

If a camera preset is not available, enabling the User mode will allow information about the camera sensor size and focal length to be entered manually. Camera and lens metadata can also be used, if it is available.

When using the User mode, information about the camera such as lens focal length or lens distortion can still be estimated automatically by PFTrack if necessary.

Please note that it is important to ensure data is entered correctly. For example, there is an important link between the camera sensor size, the lens focal length, and the field of view of the virtual camera that PFTrack is using. If either the sensor size or focal length values are incorrect, the field of view of the virtual camera will not match that of the real camera and this may cause problems during the matchmoving process. Significant errors may in fact prevent the camera being tracked entirely.

Auto Mode

If information about the camera sensor size or lens focal length is not available, or you are uncertain about the accuracy of the data, then the Auto mode should be enabled.

When using the Auto mode, a default sensor size will be selected for the shot and all parameters of the camera (such as lens distortion and focal length) will be estimated automatically by PFTrack during the matchmoving process.

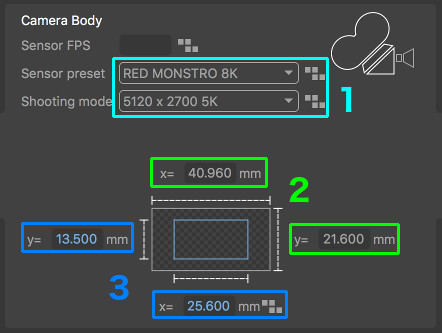

Camera Body

The Camera Body panel contains information related to the camera sensor and shooting mode, and is available when using either the Preset or User mode.

When User mode is enabled, the Sensor preset and Shooting mode menus (1) can be used to select a suitable sensor preset for your clip. The sensor preset defines the overall size of the sensor (2) and the area of the sensor actually used to capture the image (3).

The sensor size values are colour-coded to indicate their reliability:

Red: indicates that the parameter has not been set, or is unknown. These parameters must be set before the virtual camera model can be completed.

Orange: when reading values from metadata, this indicates that the value has been estimated, rather than read directly. Parameters with this colour should be checked to make sure the data is suitable before continuing.

This colour coding is reflected in the virtual camera and frustum displayed in the Viewer Window.
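The colour coding can be thought of as a small state attached to each sensor value. The sketch below is purely illustrative: `Reliability`, `classify` and `blocking_parameters` are hypothetical names, not part of PFTrack, and the third "set" state (a value entered or read directly, with no warning colour) is an assumption, since only red and orange are described above.

```python
from enum import Enum
from typing import Optional


class Reliability(Enum):
    UNKNOWN = "red"  # not set or unknown: must be set before the model is complete
    ESTIMATED = "orange"  # estimated from metadata rather than read directly: check it
    SET = "none"  # assumption: entered or read directly, so no warning colour


def classify(value: Optional[float], estimated_from_metadata: bool = False) -> Reliability:
    """Map a sensor size value to its (hypothetical) reliability state."""
    if value is None:
        return Reliability.UNKNOWN
    if estimated_from_metadata:
        return Reliability.ESTIMATED
    return Reliability.SET


def blocking_parameters(params: dict[str, Reliability]) -> list[str]:
    """Names of parameters that still prevent the virtual camera model from being completed."""
    return [name for name, state in params.items() if state is Reliability.UNKNOWN]


sensor = {
    "sensor width": classify(25.6),
    "sensor height": classify(13.5, estimated_from_metadata=True),
    "active width": classify(None),
}
print(blocking_parameters(sensor))  # ['active width']
```

Orange values do not block completion in this sketch; they are only flagged for checking, which mirrors the description above.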

Windowed and Scaled sensors

Note that the full sensor size of your camera may differ from the sensor area actually used to capture the footage if the camera offers several shooting modes.

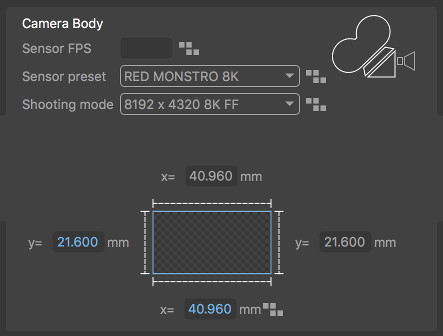

For example, a RED MONSTRO 8K sensor has an overall size of 40.96 x 21.6 mm. When shooting at 8K 8192 x 4320, the full sensor area is used:

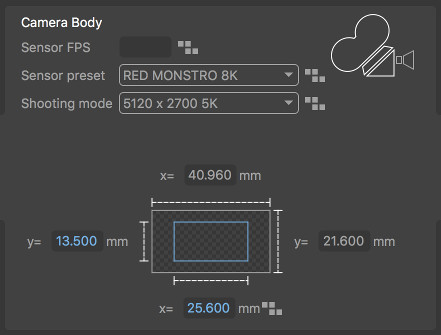

However, as this is a windowed sensor, when shooting in 5K mode at 5120 x 2700, only part of the sensor is exposed, and this area is 25.6 x 13.5 mm:

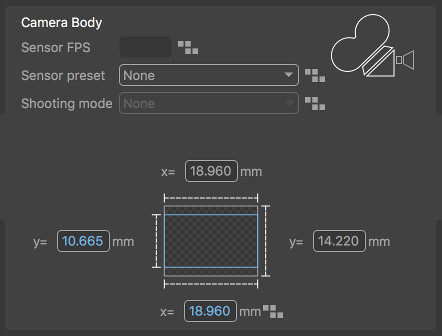
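For a windowed sensor, the exposed area follows directly from the pixel pitch: the MONSTRO figures above give 40.96 mm / 8192 px = 21.6 mm / 4320 px = 0.005 mm per photosite. The sketch below reproduces the 5K figure under the assumption of square photosites; `SensorPreset` and `windowed_area` are hypothetical names for illustration, not a PFTrack API or preset file format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SensorPreset:
    """Hypothetical description of a windowed sensor; not a PFTrack API."""

    name: str
    width_mm: float  # full sensor width
    height_mm: float  # full sensor height
    width_px: int  # horizontal resolution when the full sensor is read
    height_px: int  # vertical resolution when the full sensor is read

    def pixel_pitch_mm(self) -> float:
        # Width of one photosite, assuming square photosites.
        return self.width_mm / self.width_px

    def windowed_area(self, width_px: int, height_px: int) -> tuple[float, float]:
        """Sensor area (mm) exposed when only width_px x height_px photosites are read."""
        if width_px > self.width_px or height_px > self.height_px:
            raise ValueError("shooting mode exceeds the full sensor resolution")
        pitch = self.pixel_pitch_mm()
        return width_px * pitch, height_px * pitch


monstro = SensorPreset("RED MONSTRO 8K", 40.96, 21.6, 8192, 4320)
for mode, (w_px, h_px) in {"8K": (8192, 4320), "5K": (5120, 2700)}.items():
    w_mm, h_mm = monstro.windowed_area(w_px, h_px)
    print(f"{mode}: {w_mm:.2f} x {h_mm:.2f} mm")
```

This only works when the camera reads a native window of photosites; for a scaled sensor the pixel count of the frame says nothing about the area used.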

Other cameras may use a scaled sensor for different shooting modes. In these cases, the top and bottom of the frame are generally cropped so that the aspect ratio of the frame fits the full sensor width. For example, a camera may have a full sensor with a 4:3 aspect ratio and a size of 18.96 x 14.22 mm. When shooting in HD mode at 1920 x 1080 with a scaled sensor, the correct sensor area is actually 18.96 x 10.665 mm, matching the 16:9 aspect ratio of the frame.
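The scaled-sensor arithmetic is simply "keep the full width, derive the height from the frame's aspect ratio": 18.96 x 1080 / 1920 = 10.665 mm. A minimal sketch, assuming the frame is at least as wide (in aspect ratio) as the sensor so that only the top and bottom are cropped; `scaled_sensor_area` is a hypothetical helper name.

```python
def scaled_sensor_area(sensor_w_mm: float, sensor_h_mm: float,
                       frame_w_px: int, frame_h_px: int) -> tuple[float, float]:
    """Sensor area used when a scaled sensor keeps its full width (hypothetical helper)."""
    # Frame aspect >= sensor aspect  <=>  frame_w * sensor_h >= frame_h * sensor_w
    if frame_w_px * sensor_h_mm < frame_h_px * sensor_w_mm:
        raise ValueError("frame is narrower than the sensor; the sides would be cropped instead")
    # Keep the full width; the height follows from the frame's aspect ratio.
    return sensor_w_mm, sensor_w_mm * frame_h_px / frame_w_px


w, h = scaled_sensor_area(18.96, 14.22, 1920, 1080)
print(f"{w:.3f} x {h:.3f} mm")  # 18.960 x 10.665 mm
```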

It is important to ensure that this information is entered accurately for your shot, as entering the wrong value will affect the field of view of the virtual camera model that PFTrack is using. This data is specific to your camera, so if a sensor preset is not available for your particular camera, please consult your camera documentation to identify the type of sensor being used and the correct sensor area for your shooting mode.

Manually Generating Sensor Presets

If you wish to create a camera body sensor preset for a sensor that is not available in the Sensor preset list, you can do this easily by creating a small XML file. This file will be loaded automatically each time PFTrack runs. Further information about sensor preset files is available here.

Camera Lens

The Camera Lens panel contains information related to the camera lens, and the virtual camera model that PFTrack is using. The type of camera lens can be specified using the following buttons:

Prime: When this button is active, a prime lens is used, with a single focal length value for the entire shot.

Zoom: When this button is active, a zoom lens is used, and the focal length changes over the course of the shot.

If focal length metadata is available and has been connected, the type of lens and focal lengths will be updated automatically, and the metadata indicators next to each value will show whether the metadata is being used exactly as specified or as a hint.

Prime Lenses

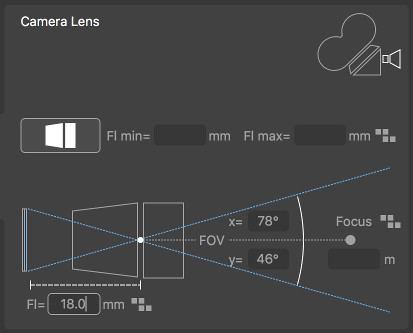

Prime lenses have a fixed focal length. If this focal length is known, it can be entered into the Focal Length (Fl) edit box. Once the focal length is entered, the field of view of the virtual camera used by PFTrack will also be displayed.
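As a cross-check on the displayed value, the field of view for a prime lens can be computed from the sensor area and focal length with the pinhole relation. This sketch assumes a distortion-free lens, so it may not match PFTrack's displayed value exactly; `field_of_view_deg` is a hypothetical helper name.

```python
import math


def field_of_view_deg(sensor_w_mm: float, sensor_h_mm: float,
                      focal_mm: float) -> tuple[float, float]:
    """Horizontal and vertical field of view (degrees) for an ideal pinhole camera."""
    if focal_mm <= 0:
        raise ValueError("focal length must be positive")
    h_fov = 2 * math.atan(sensor_w_mm / (2 * focal_mm))
    v_fov = 2 * math.atan(sensor_h_mm / (2 * focal_mm))
    return math.degrees(h_fov), math.degrees(v_fov)


# 5K window of a RED MONSTRO 8K sensor (25.6 x 13.5 mm) with a 35 mm prime.
h, v = field_of_view_deg(25.6, 13.5, 35.0)
print(f"horizontal {h:.1f} deg, vertical {v:.1f} deg")
```

Because the sensor area appears in the numerator, the wrong shooting-mode area shifts the field of view just as much as a wrong focal length would.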

Zoom Lenses

Zoom lenses are able to change focal length over the course of the shot. Focal length will vary between the minimum (Fl min) and maximum (Fl max) values, which must be entered in the edit boxes.
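The Fl min and Fl max values bound the field of view the virtual camera can take during the shot: the longest focal length gives the narrowest view, the shortest the widest. The sketch below computes that range and flags frames whose focal length (for example, from hypothetical per-frame lens metadata) falls outside the entered limits. It assumes a distortion-free pinhole camera, and all function names are hypothetical.

```python
import math


def horizontal_fov_deg(sensor_w_mm: float, focal_mm: float) -> float:
    # Ideal pinhole relation; lens distortion is ignored.
    return math.degrees(2 * math.atan(sensor_w_mm / (2 * focal_mm)))


def zoom_fov_range_deg(sensor_w_mm: float, fl_min_mm: float,
                       fl_max_mm: float) -> tuple[float, float]:
    """(narrowest, widest) horizontal field of view over a zoom range."""
    if not 0 < fl_min_mm <= fl_max_mm:
        raise ValueError("expected 0 < Fl min <= Fl max")
    return horizontal_fov_deg(sensor_w_mm, fl_max_mm), horizontal_fov_deg(sensor_w_mm, fl_min_mm)


def frames_outside_range(focals_mm: list[float], fl_min_mm: float, fl_max_mm: float) -> list[int]:
    """Indices of frames whose focal length falls outside [Fl min, Fl max]."""
    return [i for i, f in enumerate(focals_mm) if not fl_min_mm <= f <= fl_max_mm]


# Hypothetical 24-70 mm zoom on a 25.6 mm wide sensor area.
narrow, wide = zoom_fov_range_deg(25.6, 24.0, 70.0)
print(f"FOV between {narrow:.1f} and {wide:.1f} degrees")
print(frames_outside_range([24.0, 35.0, 71.5, 50.0], 24.0, 70.0))  # [2]
```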

Focus Distance

If camera focus distance metadata is available and has been connected, it will be displayed here. This can be used to help scale the shot using the Orient Camera node once the camera has been tracked.
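To illustrate why a focus distance helps with scale: a solved camera lives in arbitrary units, so if the in-focus subject has been tracked as a 3D point, the ratio between the real focus distance and the subject's solved depth gives a units-to-metres factor. This is only a sketch of the principle, not a description of how the Orient Camera node works; it assumes the focus distance is measured along the camera's viewing direction, and `scale_from_focus_distance` is a hypothetical name.

```python
import math


def scale_from_focus_distance(camera_pos, view_dir, subject_pos, focus_distance_m: float) -> float:
    """Factor converting arbitrary solve units into metres (hypothetical helper)."""
    length = math.sqrt(sum(c * c for c in view_dir))
    if length == 0:
        raise ValueError("viewing direction must be non-zero")
    offset = [s - c for s, c in zip(subject_pos, camera_pos)]
    # Depth of the subject along the optical axis, in solve units.
    depth = sum(o * d for o, d in zip(offset, view_dir)) / length
    if depth <= 0:
        raise ValueError("subject must be in front of the camera")
    return focus_distance_m / depth


# Camera at the origin looking down -Z; subject 2 solve units deep; lens focused at 4 m.
scale = scale_from_focus_distance((0, 0, 0), (0, 0, -1), (0.5, 0.1, -2.0), 4.0)
print(scale)  # 2.0
```

Focus distance metadata is rarely precise, so a factor like this is best treated as a rough starting point for scaling rather than a measurement.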

Connected Metadata

When metadata is available in the loaded media, it can be connected to the virtual camera model using the Connect toggles in the Active MetaData Window.

As metadata is connected, the metadata indicators next to each parameter will change according to how the metadata is being used:

Not connected: This indicates that no metadata is connected to the camera parameter.

Hint: This indicates that metadata is connected but is potentially unreliable, and is therefore only being used as a hint.

Exact: This shows that metadata is connected and the metadata value is being used exactly as specified in the media.
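The three indicator states can be modelled as a small enum describing what a metadata value contributes to a camera parameter. The sketch below is illustrative only: the names are hypothetical, and reading "hint" as "a starting value the solve may still adjust" and "exact" as "a locked value" is an interpretation of the descriptions above, not documented PFTrack behaviour.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class MetadataUse(Enum):
    NOT_CONNECTED = "not connected"
    HINT = "hint"  # connected, but potentially unreliable
    EXACT = "exact"  # connected and used exactly as specified


@dataclass(frozen=True)
class Contribution:
    value: float
    locked: bool  # assumption: True means the solve must not change the value


def metadata_contribution(metadata_value: float, use: MetadataUse) -> Optional[Contribution]:
    """What a metadata value contributes to a camera parameter (None if not connected)."""
    if use is MetadataUse.NOT_CONNECTED:
        return None
    # A hint is only a starting point; an exact value is taken as given.
    return Contribution(metadata_value, locked=use is MetadataUse.EXACT)


for use in MetadataUse:
    print(use.value, metadata_contribution(35.0, use))
```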