Attach Z-Channel

Note: This node requires media provided by an external depth-sensor device that captures z-depth data as well as RGB image data. The Pixel Farm's PFCapture app is available for free from Apple's iOS App Store for capturing such data with an iPad, and is compatible with the Occipital Structure Sensor.

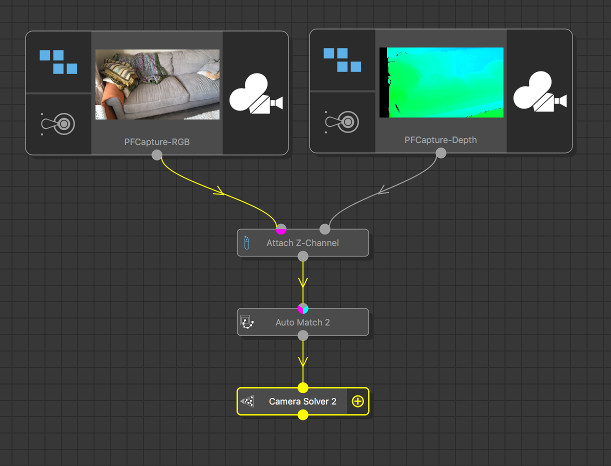

This node can be used to decode z-depth information from a secondary clip, and attach it to another RGB clip as a z-channel. Once a z-depth channel has been attached to an RGB clip, z-depth data will be passed down-stream for use in other nodes. The Auto Match, Auto Track, and User Track nodes will take advantage of this data by recording a depth value for each tracker in each frame. This information can then be used by the Camera Solver node to help track camera motion and set a real-world scale for the camera path.

On macOS, QuickTime movies recorded by PFCapture also contain metadata recorded by the app that can be used to set the overall camera orientation. When transferring a PFCapture movie file off your iPad, use software that copies the original movie file in its entirety onto your Mac (such as the macOS Image Capture application) so that the metadata is preserved. Software that re-renders the file instead of copying it may remove the metadata.

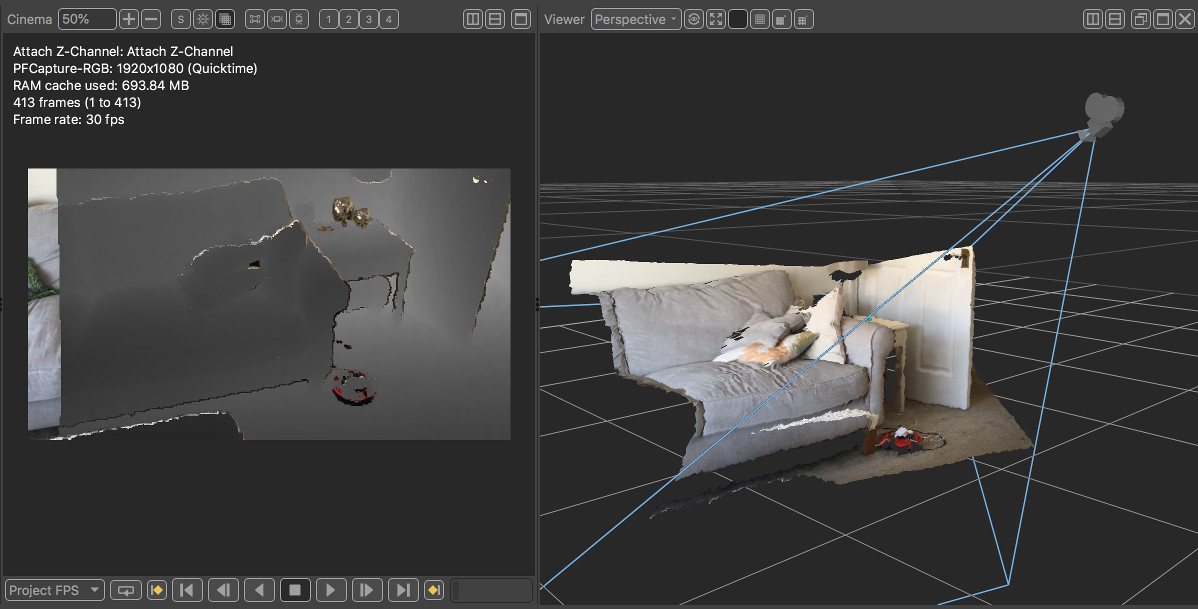

Note that the per-pixel z-depth information provided by the secondary z-depth clip must be pre-aligned to the image data in the RGB clip. An example of an aligned RGB image and z-channel is shown below, recorded using The Pixel Farm's PFCapture iOS app and an Occipital Structure Sensor:

Usage

Attaching Depth Data

The following tree illustrates how the Attach Z-Channel node can be used to attach z-depth data recorded by The Pixel Farm's PFCapture iOS app to an RGB image:

The source of the z-depth data must first be specified using the Depth source menu. Data from the specified channel(s) is then converted to a z-depth value at each pixel using the following conventions:

Red, Green, Blue, Alpha

For these channels, a depth value is read directly from either the red, green, blue or alpha components of the image.

If the Normalized option is enabled, the pixel value is assumed to be normalised to lie between the near and far clip planes (otherwise it is assumed to represent a true depth value).

For normalised depth values, the Linear option specifies whether the pixel values are mapped linearly between the near and far planes, or whether they represent values captured from a GPU depth buffer. The Inverted option can also be used to invert the relationship between pixel values and the near/far planes.
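As a rough illustration of how these options interact, the sketch below converts a single pixel value into a depth value. The function name `pixel_to_depth` is hypothetical, and the non-linear branch uses the standard perspective depth-buffer relation for values stored in the range 0 to 1; this is an assumption, as the exact conversion used by the node is not specified here.

```python
def pixel_to_depth(value, near, far, normalized=True, linear=True, inverted=False):
    """Convert a pixel value to a z-depth value (illustrative sketch only)."""
    if not normalized:
        # The pixel already holds a true depth value.
        return value
    if inverted:
        # Assumed meaning of Inverted: swap which end maps to near and far.
        value = 1.0 - value
    if linear:
        # Linear mapping between the near and far clip planes.
        return near + value * (far - near)
    # Assumed GPU depth-buffer convention: perspective depth stored in [0, 1].
    return (near * far) / (far - value * (far - near))
```

With a near plane of 1 and a far plane of 3, a normalised value of 0.5 gives a depth of 2 under the linear mapping, and 1.5 under the assumed depth-buffer mapping.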

OpenEXR 'Z'

When this option is chosen for the depth channel, z-depth values will be extracted from the 'Z' channel in the secondary OpenEXR clip.

If the Normalized option is enabled, the pixel value is assumed to be normalised to lie between the near and far clip planes (otherwise it is assumed to represent a true floating-point depth value).

For normalised depth values, the Linear option specifies whether the pixel values are mapped linearly between the near and far planes, or whether they represent values captured from a GPU depth buffer. The Inverted option can also be used to invert the relationship between pixel values and the near/far planes.

PFCapture

When this option is chosen for the depth channel, z-depth values will be decoded from the hue of each pixel's red, green and blue channels. This is currently only supported for clips recorded using The Pixel Farm's PFCapture iOS app, available for free from Apple's iOS App Store.
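The general idea of hue-encoded depth can be sketched as follows. This is not the actual PFCapture encoding, which is not described here: the function name `hue_to_depth`, the linear mapping of hue onto the near/far range, and the use of the HSV value component as the brightness compared against a black-level threshold are all assumptions made for illustration.

```python
import colorsys

def hue_to_depth(r, g, b, near, far, black_level=0.1):
    """Decode a hue-encoded depth from an RGB pixel (illustrative sketch only).

    r, g and b are floats in the range 0 to 1. Returns None when the pixel
    is too dark to be treated as carrying a valid depth value.
    """
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    if v <= black_level:
        # Assumption: pixels at or below the black level hold no depth.
        return None
    # Assumption: hue in [0, 1) maps linearly onto the near/far range.
    return near + h * (far - near)
```

Under these assumptions a pure red pixel (hue 0) decodes to the near plane, and a black pixel is reported as having no valid depth.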

Viewing Depth Data

Once depth data is attached to the primary clip, it can be viewed in the Viewer windows provided a camera is available from up-stream. The depth data is rendered as a coloured triangular mesh, with colour taken from the RGB channels of the primary clip. Various controls are available for displaying the data, as described below.

Using Depth Data in the Tree

Once the z-depth channel is attached to the clip, an Auto Track or User Track node can be used to track features. Because z-depth data is now available, a z-depth value will be assigned to each tracker in each frame, and used by the Camera Solver node to help track the camera. This will also set a real-world scale according to the units used to define depth in the z-channel input clip. For data recorded by The Pixel Farm's PFCapture iOS app, one unit corresponds to one metre. Additional metadata recorded by the iPad will also be used to orient the camera correctly.

Controls

Depth

Depth source: The source of the depth data in the second input clip. Options are Red, Green, Blue or Alpha to take z-depth information from either the red, green, blue or alpha channels; PFCapture, which will convert the hue value of the RGB pixel colour into a depth value from clips captured by The Pixel Farm's PFCapture iOS app; or OpenEXR 'Z' which will take depth information from a channel named 'Z' in an OpenEXR clip.

Frame offset: The offset applied to the current frame number that is used to read a frame from the secondary z-depth clip. For example, if this value is set to 1 then z-depth data for frame 100 in the RGB clip will be read from frame 101 in the z-depth clip.

Near clip plane: The value of the near clipping plane.

Far clip plane: The value of the far clipping plane.

Scale factor: The scale factor that is applied when converting a pixel value to a z-depth value.

Black level: The minimum black level above which a pixel is considered to contain a valid depth value. Note that this edit box is only available when using the PFCapture channel source.

Normalized: The pixel values stored in the secondary clip contain normalised values.

Linear: The pixel values stored in the secondary clip represent linear depth values. Note that this option is only available for normalised data.

Inverted: The pixel values stored in the secondary clip contain inverted depth values. Note that this option is only available for normalised data.
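The Frame offset control described above amounts to simple frame arithmetic, sketched here with a hypothetical helper named `depth_frame_for`:

```python
def depth_frame_for(rgb_frame, frame_offset):
    """Return the z-depth clip frame read for a given RGB clip frame."""
    return rgb_frame + frame_offset
```

With an offset of 1, frame 100 of the RGB clip reads z-depth data from frame 101 of the z-depth clip. A negative offset reads from an earlier frame instead.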

Display

Viewer proxy: The resolution used to display triangular meshes in Viewer windows. For high resolution images, selecting Half, Third or Quarter will increase rendering performance.

Transparency %: The amount of transparency that is used to display a grey-scale depth map in the Cinema window.

Grey-scale gamma: The amount of gamma correction that is used to display a grey-scale depth map in the Cinema window.

Depth cut %: The difference in depth values (as a percentage of the distance between the near and far camera planes) at which triangular mesh edges will be cut when displaying the depth map in 3D viewer windows. Note that this option is for display purposes only and does not affect the depth map being passed down-stream from the node.

Show ground: When enabled, the ground-plane will be displayed.

Show horizon: When enabled, the horizon line will be displayed.

Show trackers: When enabled, the solved tracking points will be displayed in the Viewer windows.

Show geometry: When enabled, the geometric mesh objects will be displayed in the Viewer windows.

Show depth map: When enabled, a grey-scale depth map will be displayed in the Cinema window if one is available for the current frame.
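The Depth cut % control described above can be read as a threshold test on neighbouring depth values. The sketch below shows one plausible form of that test; the function name `edge_is_cut` and the choice to compare the two end points of a mesh edge are assumptions, not a description of the node's actual implementation.

```python
def edge_is_cut(depth_a, depth_b, near, far, depth_cut_percent):
    """Decide whether a mesh edge should be cut (illustrative sketch only).

    The edge is cut when the depth difference between its end points exceeds
    the given percentage of the distance between the near and far planes.
    """
    threshold = (depth_cut_percent / 100.0) * (far - near)
    return abs(depth_a - depth_b) > threshold
```

For example, with near and far planes at 0.5 and 4.5 and a depth cut of 10%, the threshold is 0.4 units: an edge spanning depths 1.0 and 1.2 is kept, while an edge spanning 1.0 and 2.0 is cut.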

App Store and iPad are trademarks of Apple Inc., registered in the U.S. and other countries.

Structure is a trademark of Occipital, Inc., registered in the U.S.